From Hype to Hesitation: A Longitudinal Analysis of User Sentiments towards AI‑Enabled Fintech Lending Platforms

Authors: Arivazagan Jayabalan, Shahrukh Saleem, Prem Kumar, Sudalaimuthu Shanmugam

Journal: International Journal of Information Engineering and Electronic Business @ijieeb

Published in issue: No. 2, Vol. 18, 2026.

Free access

The rise of FinTech lending in India has transformed credit access, yet studies examining customer experiences with artificial intelligence (AI)-enabled FinTech lending platforms remain limited. This study investigates the key drivers of user experience and the evolving sentiment toward AI-enabled lending platforms by analysing online reviews from 2017 to 2024 using LDA topic modelling and lexicon-based longitudinal sentiment analysis. Twelve key topics emerged, revealing significant negative sentiment around customer support, eligibility checks, documentation, repayment, and app trustworthiness. In contrast, app usability and interface design maintained strong positivity, while loan approval and disbursement processes saw declining sentiment. Despite these pain points, overall user experience remained positive, indicating that the perceived benefits such as speed, efficiency, and convenience provided by these platforms outweighed concerns like high interest rates, privacy risks, and poor customer service. The findings highlight a nuanced balance between technological advantages and operational shortcomings, offering insights for improving AI-enabled lending platforms.

Artificial Intelligence, FinTech Lending, Instant Loan, User Experience, Online Reviews, Sentiment Analysis, Topic Modelling

Short URL: https://sciup.org/15020252

IDR: 15020252 | DOI: 10.5815/ijieeb.2026.02.10

Text of the research article: From Hype to Hesitation: A Longitudinal Analysis of User Sentiments towards AI‑Enabled Fintech Lending Platforms

Published Online on April 8, 2026 by MECS Press

The rapid advancement of Artificial Intelligence (AI) and Machine Learning (ML) in FinTech lending has revolutionized access to credit, particularly through instant loan platforms on mobile apps [1,2]. These AI-enabled instant loan platforms enhance the loan journey globally, including in India, by leveraging technological innovations for alternative credit scoring and underwriting, resulting in quicker approvals and automated risk assessments [3]. Users benefit from smoother navigation and self-service models aided by AI in understanding repayment schedules and financial planning [4,5]. Unlike traditional banks, FinTech operates in a digital-only environment [6], rapidly expanding its customer base and capturing about 87% of the small-ticket personal loan market in India by FY 2024 [7]. However, questions persist about whether these instant loan platforms genuinely improve the borrowing experience or create new frustrations. Although some studies have explored user experiences with AI-enabled lending platforms [8], comprehensive analyses of user sentiments and satisfaction drivers remain limited. To address this gap, we conduct a longitudinal study (2017–2024) of user reviews from ten instant loan apps. Using Latent Dirichlet Allocation (LDA) for topic modeling in combination with lexicon-based sentiment analysis, we examine the evolution of topic prevalence and associated sentiments over time. Unlike previous static analyses, our approach captures temporal shifts in user experience within the AI-enabled lending context and interprets these dynamics through the frameworks of Expectation–Confirmation Theory and Trust Theory. Guided by this framework, the study aims to address the following research questions:

RQ1: What are the key themes in user experiences with instant loan platforms, as expressed in online reviews?

RQ2: What sentiments do users associate with different aspects of instant loan platforms?

RQ3: What are the temporal trends and shifts in sentiments and discussions surrounding various aspects of instant loan platforms, and how do they evolve over time?

2. Literature Review

In India, FinTech lending encompasses both P2P (peer-to-peer) platforms and tech-enabled NBFCs (balance sheet lenders). The latter, though regulated as NBFCs, are classified as FinTechs due to their reliance on AI-enabled processes for underwriting, digital distribution, and automated customer interfaces, which are key indicators of financial innovation [1,9]. While numerous studies have examined user experience across the broader FinTech landscape, including payment systems and mobile wallets [10,11], research specifically focused on FinTech lending platforms remains limited. Within this limited body of work, P2P adoption studies dominate [12,13], while the few studies of user experiences on P2P FinTech lending platforms rely predominantly on satisfaction surveys and interviews. In contrast, some researchers have leveraged online reviews to assess overall service quality and user sentiment toward FinTech lending platforms. For instance, one study [14] extracted emotions from an unstructured dataset of P2P loans using Non-negative Matrix Factorization (NMF) and explored how individual borrowers could improve the likelihood of their loans receiving funding on P2P platforms.

Similarly, user sentiments toward P2P lending platforms in Indonesia were analysed by examining user ratings and sentiment classification. The findings revealed that, in their reviews of the platform, users prioritized the speed of loan processing, ease of application, and convenience [15]. Another study [16] took this further by analysing app reviews from the Google Play Store to explore user sentiments and emotions regarding P2P lending platforms in India, highlighting the pandemic's role in shifting attitudes toward alternative borrowing solutions. However, much of the existing literature focuses on the P2P lending model, while the balance sheet lending model, in which loans are funded directly from lenders' own funds, dominates the FinTech lending landscape in India. Notably, studies examining user experiences and sentiment regarding these instant loan platforms are scarce, specifically from the vantage point of their reliance on AI.

Among the few relevant works, the study by Anil & Mishra [1] examined the role of AI in P2P lending in India through qualitative analysis, addressing how AI-enabled features influence lending processes. While this research hinted at user involvement, it did not directly investigate user sentiment towards AI-enabled lending platforms. Another critical study analysed user reviews of instant loan apps from the Google Play Store between March 2019 and December 2021 [6]. Utilizing Latent Dirichlet Allocation (LDA) for text analytics, the study highlighted potential risks associated with instant loan apps; however, it did not assess how sentiment surrounding the topics evolved over time. Recent research underscores the paradox of convenience associated with instant loans, highlighting concerns such as data privacy risks, microloan-induced debt traps, and negative impacts on borrowers' credit scores [17]. Despite these growing concerns, there is a lack of longitudinal studies that systematically analyze user feedback to capture the evolving nature of user sentiments, expectations, and concerns regarding FinTech lending platforms. Previous research has largely depended on cross-sectional surveys or adoption-focused models, which provide only a limited view of post-adoption user experiences [18,19,20,21].

To fill this gap, theoretical frameworks such as Expectation–Confirmation Theory (ECT) and Trust Theory offer a solid foundation for examining users’ borrowing experiences over time. ECT, commonly used in information systems and consumer satisfaction research, explains how users' intentions to continue using a service and their satisfaction levels are influenced by the confirmation or disconfirmation of their initial expectations after actual use [22,23]. This framework is particularly relevant in the context of FinTech lending, as borrowers’ expectations about convenience, transparency, and fairness may change with repeated interactions with instant loan services. Additionally, Trust Theory provides important insights into how perceptions of app trustworthiness, data security, and institutional reliability affect users' ongoing engagement, particularly in high-risk financial decision-making environments [24,25,26]. This study leverages ECT and Trust Theory to analyze longitudinal user-generated reviews. This approach captures the evolving dynamics of satisfaction, trust, and perceived risks, offering theory-informed insight into user sentiments that goes beyond the initial adoption phase.

3. Methodology

The current study adopts a text mining approach to assess user sentiment towards instant lending platforms. The study uses online reviews of FinTech lending apps from the Google Play Store website and employs topic modelling and sentiment analysis tools to extract latent topics and their associated sentiment. Our sentiment classification and topic modelling are interpreted in light of ECT and Trust Theory, focusing on expectation disconfirmation and trust-related concerns.

3.1. Data Collection

The data selection criteria focused on instant loan apps that were launched after 2016, had over 10 million downloads each, and exclusively offered personal loans, maintaining this focus through June 2024. Based on these criteria, we identified 10 instant loan apps: Branch, Cashe, Kissht, MoneyView, MPokket, Nira, Olyv, PayMe, PaySense and Stashfin. We extracted reviews from these apps using R software, covering the period from January 2017 to June 2024. As these apps share similar functionality, we combined their reviews into a single comprehensive dataset [12,27]. Each review has a user rating ranging from 1 to 5. The aggregated dataset comprised a total of 476,131 reviews for analysis. This approach supports our primary objective, which is to characterise the overall landscape of user experience with AI-enabled instant loan platforms, rather than to conduct app-level comparisons in terms of business practices or app-specific issues.

3.2. Ethical Considerations

Our data collection method involved extracting publicly available mobile app reviews from the Google Play Store. We diligently reviewed Google's general Terms of Service [28] and the Google Play Terms of Service [29]. While Google's Terms of Service generally prohibit automated access and scraping of its platforms, we note the absence of a comprehensive public API that allows for large-scale collection of diverse mobile app review data for non-commercial academic research. Consequently, to address our specific research questions and gather a sufficiently broad dataset, we employed a controlled web scraping methodology. Our data collection was conducted with strict adherence to ethical research principles. We ensured that only publicly available information (review text, star rating, and timestamp) was collected. No personally identifiable information (PII) beyond what is publicly displayed was extracted or stored. All collected reviewer identifiers were removed immediately to protect reviewer privacy. Our scraping process was carefully rate-limited to avoid placing undue burden on Google's servers, with requests spaced out to mimic typical user behaviour and prevent service disruption. The collected data are stored securely on a password-protected university computer and will only be used for the stated academic purpose.

We acknowledge the inherent challenges in utilizing user-generated content from large platforms, specifically the potential presence of synthetic or 'fake' reviews. While Google employs measures to combat this, complete elimination cannot be guaranteed. Our analysis focuses on aggregate linguistic patterns across the large dataset, which aims to mitigate the impact of individual anomalous reviews. This limitation is further discussed in Section 7 (Limitations and Future Work).

3.3. Data Cleaning and Preprocessing

Since the study focuses on English-language content, non-English reviews were excluded from the dataset. Reviews containing fewer than ten words were also filtered out to ensure meaningful results from topic modelling [30,31,32,33]. Additionally, repetitive entries, ALL-CAPS text, and templated reviews were removed in accordance with best practices for identifying low-quality or artificial content [34]. We also examined the data for temporal bursts, defined as periods with more than 100 reviews per hour, and for rating extremity, where over 90% of reviews in a 24-hour window received the same rating [35]. Two instances of extreme 5-star ratings were identified in September 2020 and May 2021. After a manual inspection, templated reviews during these periods were excluded. Following these filtering steps, a total of 335,183 reviews remained for analysis. Minimal preprocessing was then applied to the text corpus, which included the removal of non-ASCII characters, app names, standard English stopwords, and frequently occurring generic terms such as "app" and "loan."

4. Data Analysis and Results

The overall average user rating of the apps is 3.2, indicating positive user sentiment. However, whether the user rating truly reflects the sentiment expressed in the reviews needs to be assessed [16,36]. Therefore, we conducted a sentiment analysis of the reviews using VADER (Valence Aware Dictionary and sEntiment Reasoner), a rule-based sentiment analyser designed to capture the nuances of social media and informal text [37]. We selected VADER because it is optimized for short, informal texts similar to app reviews and has been widely used in prior research on online review sentiment [12,16,38,39]. Moreover, our current study emphasizes longitudinal trends rather than absolute sentiment precision. Our analysis produced an average sentiment score of 0.195, denoting a positive user experience with instant loan platforms. To evaluate the alignment between VADER sentiment scores and user ratings, a Spearman’s rank correlation test was conducted [31,40]. The results showed a strong positive correlation (r=0.683, p<0.001), indicating that sentiment scores and user ratings capture similar sentiment patterns.
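To make the alignment check concrete, the following minimal Python sketch computes Spearman's rank correlation between sentiment scores and star ratings using only the standard library. The study itself was carried out in R; the function names and the six toy reviews below are illustrative assumptions, not the authors' code or data.

```python
from math import sqrt

def average_ranks(values):
    """Return 1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        # Extend the run while the next sorted value is tied with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        mean_rank = (pos + end) / 2 + 1
        for j in range(pos, end + 1):
            ranks[order[j]] = mean_rank
        pos = end + 1
    return ranks

def spearman(x, y):
    """Spearman's rho: the Pearson correlation of the rank-transformed data."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sqrt(sum((a - mx) ** 2 for a in rx))
    sy = sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)

# Hypothetical sentiment scores and star ratings for six reviews.
scores = [0.82, -0.61, 0.10, 0.55, -0.30, 0.91]
ratings = [5, 1, 3, 4, 2, 5]
rho = spearman(scores, ratings)
print(f"Spearman's rho = {rho:.3f}")
```

Because star ratings take only five values, ties are unavoidable, which is why the sketch assigns average ranks rather than using the shortcut formula that assumes distinct ranks.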

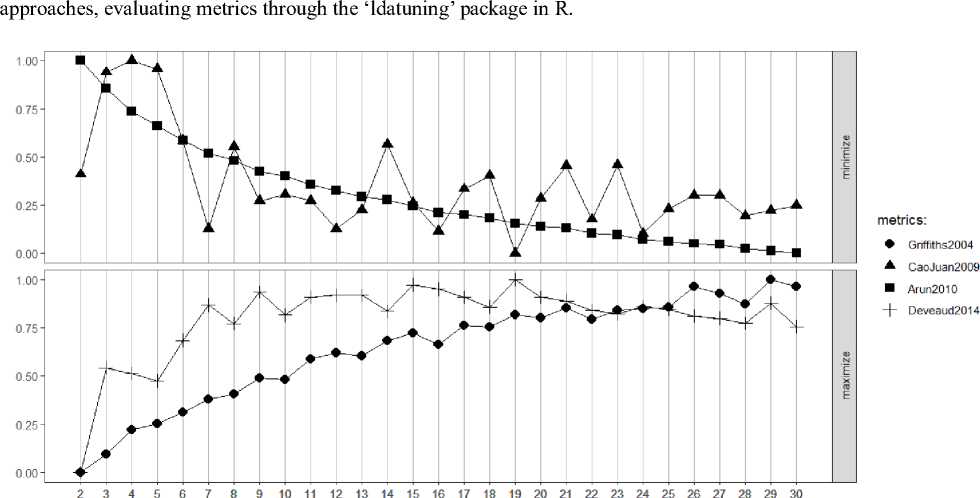

After checking this alignment, we conducted topic modelling on the preprocessed corpus using Latent Dirichlet Allocation (LDA), a generative probabilistic model used for discovering the underlying topics in a collection of documents [41]. The ‘TopicModels’ and ‘LDA’ packages in R were used to derive latent topics [42,43]. Determining the optimal number of latent topics (k) is crucial in LDA, with some scholars employing statistical methods based on metrics such as Arun2010, CaoJuan2009, Griffiths2004 and Deveaud2014 [44]. Other studies suggest estimating the optimal number of topics based on semantic coherence and exclusivity [45,46]. In our study, we utilized both approaches.


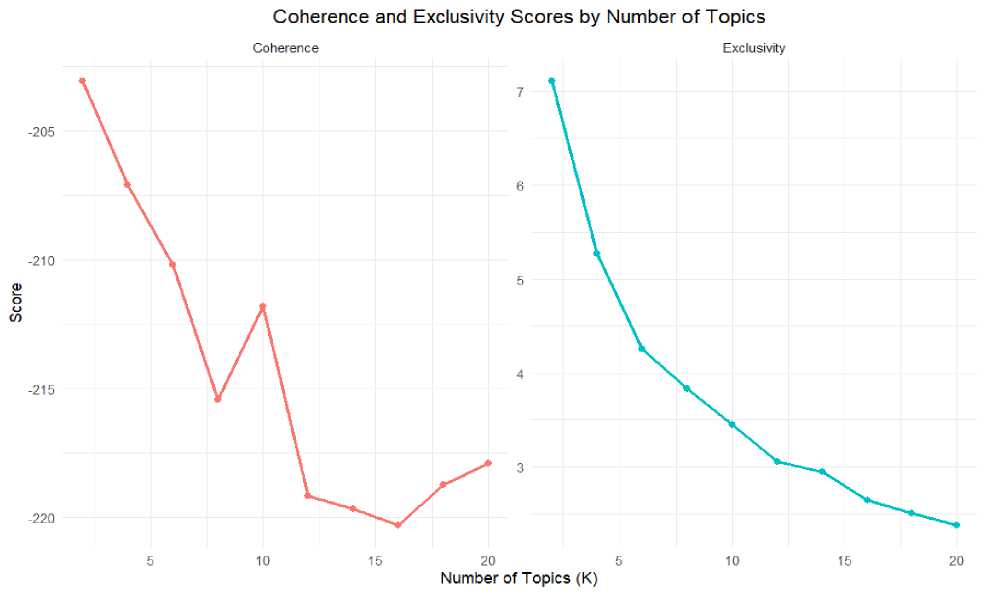

Fig. 2. Coherence and Exclusivity using UMass Coherence metric

Candidate values of k were first evaluated using four established metrics (Arun2010, CaoJuan2009, Griffiths2004, and Deveaud2014) to identify the optimal number of topics. As shown in Fig. 1, these metrics collectively indicated stabilization or diminishing returns beyond approximately k = 10, suggesting an optimal topic range between 10 and 19 [31,47]. Within this range, we fitted several candidate models (e.g., k = 8, 10, 12, 14, etc.) using Gibbs sampling with 1000 iterations. UMass coherence and exclusivity were subsequently computed to compare candidate LDA models [48]. Fig. 2 reveals that coherence peaked at k = 12 before plateauing, while exclusivity steadily declined with increasing k, indicating growing overlap between topics. Hence, k = 12 was selected as it best balances semantic coherence and topic distinctiveness. Furthermore, this choice was validated by a qualitative examination of top terms and representative documents, which confirmed that the 12-topic model yields the most interpretable and well-differentiated thematic structure [49].
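As a rough illustration of the coherence side of this comparison, the sketch below computes UMass coherence for a single topic under its usual definition: for each pair of top terms, the log of (co-document frequency + 1) divided by the document frequency of the higher-ranked term, summed over all pairs. The function name and the toy corpus are assumptions made for illustration; the source does not show how the authors computed the metric in R.

```python
from math import log

def umass_coherence(top_terms, documents):
    """UMass coherence of one topic.

    top_terms: topic terms ordered from most to least probable.
    documents: iterable of token lists.
    Every term in top_terms must occur in at least one document,
    otherwise the document frequency in the denominator is zero.
    """
    doc_sets = [set(doc) for doc in documents]

    def doc_freq(*terms):
        # Number of documents that contain all of the given terms.
        return sum(1 for d in doc_sets if all(t in d for t in terms))

    score = 0.0
    for m in range(1, len(top_terms)):
        for l in range(m):
            co_occurrences = doc_freq(top_terms[m], top_terms[l])
            score += log((co_occurrences + 1) / doc_freq(top_terms[l]))
    return score

# Hypothetical tokenized reviews.
docs = [
    ["support", "email", "response"],
    ["support", "email"],
    ["interest", "rate", "high"],
    ["interest", "rate"],
]
coherent = umass_coherence(["support", "email"], docs)  # terms that co-occur
mixed = umass_coherence(["support", "rate"], docs)      # terms that never co-occur
print(coherent > mixed)
```

A model-level score is typically obtained by averaging this value over all topics of a fitted model, and candidate values of k can then be compared on that average alongside exclusivity.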

Each topic was assigned a name based on the logical relationships among the top 20 terms identified by the model [49]. The topic names were assigned based on the consensus of two domain experts. This practice is consistent with established methodologies in previous research [37,38], wherein topic interpretation frequently depends on the judgment of researchers or experts to ensure semantic validity and contextual relevance.

Table 1. Top 10 terms of the topics

| Topic | Topic_Name | Top_Terms | Proportion | Description |
|---|---|---|---|---|
| 1 | Customer Support | support, customer, email, response, service, reply, team, contact, number, issue | 9.36% | The responsiveness of the customer support team and users’ interactions with customer support to resolve issues. |
| 2 | Documentation & Verification | document, apply, upload, process, application, submit, bank, email, verification, pending | 9.07% | Relates to paperwork required when applying for a loan, uploading documents, and the verification process. |
| 3 | App Functionality & Overall Experience | easy, helpful, service, process, recommend, user, friendly, reliable, simple, interface | 10.38% | Focuses on how user-friendly the app is, the features that make the process smooth and easy to navigate, and users’ overall experience in the loan journey. |
| 4 | Account Management | emi, payment, account, cibil, debit, update, score, close, auto, deduct | 6.90% | Relates to managing EMIs, updating account details (e.g., bank/CIBIL-linked auto-debit), and resolving payment errors. |
| 5 | Credit Limit Offered | limit, increase, credit, repayment, score, month, decrease, helpful, line, approval | 6.17% | Deals with credit limit increases and repayment issues affecting users’ limits. |
| 6 | Approval & Disbursement Process | account, credit, approval, apply, process, disburse, bank, hour, transfer, application | 8.68% | Shows how quickly loans are approved and the loan amount is transferred to users’ bank accounts. |
| 7 | Eligibility Assessment | apply, month, offer, reject, application, eligible, wait, disappoint, tell, reason | 8.05% | Concerns loan applications being rejected or accepted based on the eligibility criteria that determine loan approval. |
| 8 | Debt Collection Practices | contact, people, fraud, payment, number, agent, rbi, family, person, threat | 6.72% | Relates to the collection of delayed or defaulted EMI payments with aggressive tactics. |
| 9 | App Trustworthiness | waste, application, fake, approval, data, fraud, collect, apply, eligible, useless | 8.49% | Concerns the legitimacy of the apps, data privacy, and fake approvals. |
| 10 | Interest Rate & Charges | interest, rate, high, charge, process, fee, repayment, tenure, compare, extra | 7.90% | Focuses on the high interest rate and charges as well as the loan tenure. |
| 11 | Emergency Financial Support | application, helpful, service, need, emergency, situation, urgent, financial, problem, useful | 10.91% | Discusses quick access to funds in emergencies and the usefulness of the app. |
| 12 | Technical Aspects & Account Accessibility | account, login, issue, phone, unable, solve, error, update, pan, otp | 7.38% | Relates to technical glitches such as login problems, OTP delays, or app errors that hinder access to accounts. |

4.1. Trend Analysis of Topic Prevalence and Sentiment Polarity

While user ratings provide a general indication of satisfaction, we used VADER sentiment scores to determine topic sentiment, as they effectively capture nuanced sentiment intensity and contextual information within textual reviews [39]. Because not all topics are equally important, weighting sentiment scores by topic proportion ensures that topics with higher relevance in the data have a greater influence on the overall sentiment assessment. Sentiment polarity scores, S(T_k), were calculated for each topic as a weighted average of document sentiment scores, where the weights were the document contribution probabilities derived from the LDA model [52] (Eq. 1).

$$S(T_k) = \frac{\sum_{i=1}^{n} \theta_{i,k} \cdot \mathrm{Sentiment}(D_i)}{\sum_{i=1}^{n} \theta_{i,k}} \qquad (1)$$

Where, $T_k$ = Topic k

$D_i$ = Document i

$\mathrm{Sentiment}(D_i)$ = Sentiment score of document i

$\theta_{i,k}$ = Contribution probability of document i to topic k

$n$ = Total number of documents contributing to topic k
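The weighted average defined above can be sketched in a few lines of Python. This is an illustration only: the function name, the 3-by-2 document-topic matrix, and the sentiment values are hypothetical, and the authors' own computation was done in R.

```python
def topic_sentiment(theta, sentiment, k):
    """Weighted mean sentiment for topic k.

    theta: per-document topic probabilities, indexed as theta[i][k].
    sentiment: per-document sentiment scores, in the same order as theta.
    """
    total_weight = sum(row[k] for row in theta)
    if total_weight == 0:
        raise ValueError("no document contributes to this topic")
    weighted_sum = sum(row[k] * s for row, s in zip(theta, sentiment))
    return weighted_sum / total_weight

# Hypothetical document-topic probabilities (3 documents, 2 topics).
theta = [
    [0.9, 0.1],
    [0.2, 0.8],
    [0.5, 0.5],
]
# Hypothetical sentiment score of each document.
sentiment = [0.6, -0.4, 0.1]

s0 = topic_sentiment(theta, sentiment, 0)
s1 = topic_sentiment(theta, sentiment, 1)
print(round(s0, 5), round(s1, 5))
```

Documents that belong mostly to a topic dominate its score: the first document (probability 0.9 for topic 0) pulls that topic's sentiment towards its own positive score, while the second document does the same for topic 1 in the negative direction.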

The calculated weighted average sentiment of each topic is presented in Table 2. To better understand the prevalence of various topics and the sentiment expressed towards them, Fig. 3 presents a visualization of the relationship between topic proportion and average sentiment. It is observed from Fig. 3 that the spread of topics, with average sentiment values ranging from -0.306 to 0.687, indicates a complex user experience landscape. Topics with sentiment scores above 0.05 are classified as positive and those with scores below -0.05 as negative, while values between -0.05 and 0.05 are regarded as neutral [53]. To address concerns about the potential instability of fixed sentiment thresholds, a sensitivity analysis was performed using three alternative cutoff specifications: ±0.10, ±0.05, and ±0.02. We assessed the agreement across classifications using Spearman’s rank correlation and Cohen’s kappa. The results indicate high stability across threshold specifications. Spearman’s correlation coefficients ranged from 0.872 to 0.882, indicating strong rank-order consistency. Cohen’s kappa values exceeded 0.95 in all pairwise comparisons, reflecting near-perfect agreement in categorical sentiment assignment [54]. The results suggest that the substantive sentiment patterns reported are not sensitive to the specific cutoff chosen [55].
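A threshold sensitivity check of this kind could be sketched as follows: scores are labelled under two symmetric cutoffs and the two labelings are compared with Cohen's kappa. The function names and the eight toy scores are assumptions for illustration; the source does not state whether the authors ran the check on reviews or on topics, and the toy values are not meant to reproduce their reported kappa.

```python
from collections import Counter

def classify(score, cutoff=0.05):
    """Label a sentiment score using a symmetric cutoff around zero."""
    if score > cutoff:
        return "positive"
    if score < -cutoff:
        return "negative"
    return "neutral"

def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two labelings of the same items.

    Undefined (division by zero) when chance agreement equals 1,
    i.e. when both labelings use a single identical category.
    """
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    return (observed - expected) / (1 - expected)

# Hypothetical sentiment scores.
scores = [0.80, 0.45, 0.07, 0.00, -0.03, -0.50, -0.65, 0.30]
labels_005 = [classify(s, 0.05) for s in scores]
labels_010 = [classify(s, 0.10) for s in scores]
kappa = cohen_kappa(labels_005, labels_010)
print(f"kappa between the 0.05 and 0.10 cutoffs: {kappa:.3f}")
```

Only scores lying between the two cutoffs (here 0.07) change label, so agreement stays high whenever few scores fall in that narrow band.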

Table 2. Sentiment trend of the Topics (2017-2024)

| Topics | Average Topic Sentiment | 2017 | 2018 | 2019 | 2020 | 2021 | 2022 | 2023 | 2024 |
|---|---|---|---|---|---|---|---|---|---|
| App Functionality & Overall Experience | 0.687 | 0.707 | 0.663 | 0.683 | 0.688 | 0.713 | 0.701 | 0.651 | 0.697 |
| Emergency Financial Support | 0.673 | 0.672 | 0.678 | 0.689 | 0.715 | 0.716 | 0.624 | 0.621 | 0.633 |
| Interest Rate & Charges | 0.451 | 0.530 | 0.464 | 0.511 | 0.492 | 0.431 | 0.404 | 0.325 | 0.325 |
| Approval & Disbursement Process | 0.386 | 0.488 | 0.432 | 0.420 | 0.521 | 0.421 | 0.240 | 0.178 | 0.151 |
| Credit Limit Offered | 0.222 | 0.333 | 0.223 | 0.226 | 0.327 | 0.223 | 0.134 | 0.089 | 0.086 |
| Customer Support | 0.093 | 0.119 | 0.154 | 0.067 | 0.174 | 0.166 | 0.011 | -0.042 | -0.062 |
| Technical Aspects & Account Accessibility | 0.038 | -0.011 | 0.017 | 0.059 | 0.129 | 0.060 | 0.014 | -0.001 | -0.011 |
| Eligibility Assessment | -0.048 | 0.015 | -0.099 | -0.010 | 0.023 | -0.035 | -0.085 | -0.143 | -0.170 |
| Account Management | -0.065 | 0.034 | -0.021 | -0.089 | -0.077 | -0.050 | -0.101 | -0.153 | -0.162 |
| Documentation & Verification | -0.075 | -0.075 | -0.075 | -0.111 | 0.037 | -0.060 | -0.105 | -0.137 | -0.153 |
| Debt Collection Practices | -0.214 | -0.110 | -0.159 | -0.159 | -0.239 | -0.211 | -0.272 | -0.345 | -0.346 |
| App Trustworthiness | -0.306 | -0.346 | -0.374 | -0.326 | -0.194 | -0.250 | -0.317 | -0.337 | -0.343 |
| Average Sentiment | 0.153 | 0.196 | 0.159 | 0.163 | 0.216 | 0.177 | 0.104 | 0.059 | 0.054 |

On the positive side, the most frequently discussed topics are emergency financial support (10.91%) and app functionality and overall experience (10.38%). Topics related to the approval and disbursement process (8.68%) and interest rate and charges (7.90%) are discussed moderately, whereas customer support (9.36%) is frequently discussed but remains close to neutral sentiment. The topic related to credit limit offered (6.17%) is less discussed. On the negative side, topics surrounding documentation and verification (9.07%), eligibility assessment (8.05%), and account management (6.90%) are moderately discussed, but their sentiment is close to neutral. The topic related to app trustworthiness (8.49%) is moderately discussed, while debt collection practices (6.72%) is less discussed. Lastly, the topic concerning technical aspects and account accessibility (7.37%), although moderately discussed, maintains a neutral sentiment.

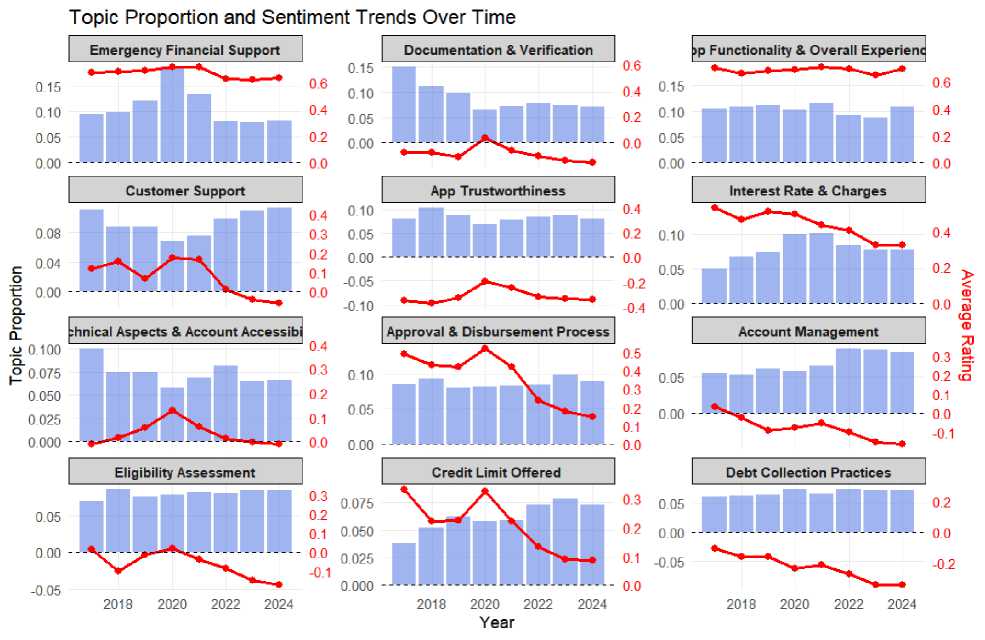

While Fig. 3 provides a snapshot of the overall topic landscape, it is crucial to understand how these topics and their associated sentiment have evolved over time. Therefore, we analysed the trends in topic sentiment from 2017 to 2024, and the results are presented in Table 2. To better understand the sentiment trend of each topic, Fig. 4 presents a visualization with vertical bars representing the topic proportion and a line graph representing the sentiment associated with the topic.

[Plot: "Sentiment Distribution of Topics"; x-axis: Average Sentiment Score, from more negative to more positive; points labelled by topic]

Fig. 3. Topic Prevalence and Sentiment Distribution

Fig. 4. Topic proportions and sentiment trend 2017-2024

5. Discussion

The current study investigated user satisfaction with AI-enabled instant loan platforms in India from 2017 to 2024. Overall user sentiment remains positive but has declined substantially, from 0.196 in 2017 to 0.054 in 2024, a 72% drop over seven years. Although the average sentiment score over the period is 0.153, the downward trend indicates growing discontent among users. Key areas such as app functionality (0.687) and emergency financial support (0.673) maintained relatively positive sentiment throughout the period, aligning with previous research that highlights the importance of technological convenience [17,56,57]. In contrast, sentiment towards the approval and disbursement process decreased from 0.488 to 0.151, indicating user concerns about processing efficiency despite improvements in AI technology. These concerns may stem from stricter regulatory requirements and evolving risk assessment models [1,4,58].
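The percentage decline quoted above follows from a simple relative-change calculation on the 2017 and 2024 values in Table 2. The short Python sketch below reproduces it; the function name is an illustrative assumption.

```python
def relative_change(start, end):
    """Change from start to end, expressed as a fraction of the starting value."""
    if start == 0:
        raise ValueError("relative change is undefined for a zero starting value")
    return (end - start) / abs(start)

# 2017 and 2024 values taken from Table 2.
overall = relative_change(0.196, 0.054)   # average sentiment
approval = relative_change(0.488, 0.151)  # approval and disbursement process

print(f"Overall sentiment: {overall:.1%}")
print(f"Approval & disbursement: {approval:.1%}")
```

Relative change is only informative when the score keeps its sign; for topics that cross zero, such as customer support (0.119 to -0.062), the absolute difference is easier to interpret.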

The sentiment surrounding customer support declined sharply from positive (0.119 in 2017) to negative (-0.062 in 2024), reflecting dissatisfaction with automated support systems such as chatbots and email responses in dealing with complex financial inquiries [58]. Users frequently reported difficulties in resolving account-related issues through customer support, exacerbating dissatisfaction. This aligns with earlier studies which identified service encounter failures, particularly inadequate employee responses to borrowers’ queries, as critical determinants of customer dissatisfaction in FinTech lending platforms [3]. Sentiment regarding documentation and verification remained largely negative, declining from -0.075 in 2017 to -0.153 in 2024, suggesting an association with user frustrations around e-KYC and outsourced verification processes [17,59]. Users, while acknowledging the convenience of instant loans, expressed concerns over high interest rates; sentiment on this topic dropped from 0.530 to 0.325, suggesting a rise in hidden fees and predatory pricing. These results support earlier findings on the negative impact of loan cost structures on FinTech platform adoption [6]. Issues with loan account management were reflected in a decline from a slightly positive score of 0.034 in 2017 to a negative score of -0.162 in 2024, with operational inefficiencies leading to dissatisfaction over EMI tracking and credit score updates. Such inefficiencies pose risks to users’ access to future credit [60,61].

Lastly, negative sentiment around debt collection practices and app trustworthiness persisted, with trust issues amplified by reports of aggressive recovery practices and privacy concerns (-0.343 by 2024). These findings underscore the importance of addressing user concerns to restore confidence in the FinTech lending ecosystem [12,17].

5.1. Theoretical Implications

5.2. Practical Implications

6. Conclusions

This study contributes to the existing literature on user experiences with AI-enabled FinTech lending platforms by taking a longitudinal approach to uncover temporal shifts in sentiment on topics that influence user satisfaction. This approach reveals the dynamic nature of user experiences over time, extending beyond static evaluations. Furthermore, our study extends the current literature by critically examining the role of AI in powering the core functional aspects of instant loan platforms. Earlier studies have investigated AI’s role in financial services, primarily focusing on credit risk assessment, loan default predictions, and the overall impact on the lending process [1,62,63,64]. Unlike these studies, which are centered on the lender’s perspective, our research prioritizes the user's viewpoint, exploring how AI-enabled features and functionalities are associated with their satisfaction and experience. Through this new perspective, our study offers a clearer understanding of how AI enabled processes are associated with user experience and satisfaction toward instant loan platforms in the context of approval and disbursement, eligibility assessment, loan account management, and customer support.

Our findings not only provide descriptive insights but also fit within established theoretical frameworks that explain user satisfaction and trust in digital services. Firstly, the results support Expectation–Confirmation Theory (ECT), which suggests that satisfaction is influenced by whether prior expectations are confirmed or disconfirmed [22,23]. Positive sentiment regarding app usability and emergency financial support indicates that user expectations for convenience and accessibility were met or exceeded. In contrast, declining sentiment in areas such as customer support, documentation, and eligibility assessment reflects unmet expectations of responsiveness and fairness, leading to dissatisfaction despite the technological efficiency of the platforms. Secondly, our findings align with Trust Theory, which highlights the importance of perceived integrity, competence, and benevolence in fostering user confidence in digital financial services [24,26]. Persistent negative sentiment towards debt collection practices and app trustworthiness indicates a decline in trust when users encounter aggressive recovery tactics or privacy concerns. These trust issues can hinder adoption and satisfaction, even when the platforms' functional aspects are robust.

By integrating these frameworks, our study builds on previous FinTech research that has primarily emphasized functional efficiency and risk assessment [1,65]. We illustrate that user satisfaction with AI-enabled lending platforms is not merely about speed or convenience; it is significantly shaped by the management of expectations and the establishment of trust. This theoretical foundation highlights the necessity of balancing technological innovation with transparent communication, ethical practices, and user-centric design to maintain long-term confidence in FinTech lending platforms.

Our analysis indicates that users generally have a positive sentiment toward instant lending platforms in key functional areas. However, the longitudinal analysis reveals a declining trend in positive sentiment. To address this, platforms should prioritize process efficiency by improving AI enabled processes for quicker approvals and disbursements. Additionally, it is crucial for platforms to continuously audit their credit assessment algorithms to establish unbiased eligibility criteria [66]. User complaints regarding unfair rejections and high interest rates indicate that AI-enabled pricing and credit assessments should be fair and justifiable, mitigating bias and discrimination concerns [67].

Our findings highlight significantly negative sentiment concerning trustworthiness and debt collection practices. Instant loan platforms should proactively address these trust issues by enhancing their debt collection methods and implementing transparent policies. Furthermore, our analysis indicates that customer support services are falling short of user expectations, likely due to reliance on chatbots and automated systems. FinTech platforms should adopt a hybrid customer support model incorporating human agents to handle complex inquiries, ensuring that customers receive thorough assistance when automated solutions prove inadequate [68,69]. The temporal mapping of topics and sentiments enables platform managers to identify not only the significance of various issues but also the timing of fluctuations in specific concerns, thereby providing a more dynamic evidence base for targeted intervention.
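The temporal mapping described above can be illustrated with a minimal sketch. It assumes each review has already been assigned a year, a dominant topic, and a compound sentiment score in [-1, 1]; the function names, topic names, and toy values are hypothetical and are not taken from the study's corpus or code. The sketch averages sentiment per topic and year and then fits an ordinary least-squares slope, so that a negative slope flags a topic whose sentiment is declining over time.

```python
from collections import defaultdict
from statistics import mean


def yearly_topic_sentiment(reviews):
    """Average sentiment score per (topic, year).

    `reviews` is an iterable of (year, topic, score) tuples, where `score`
    is assumed to be a compound sentiment value in [-1, 1].
    """
    buckets = defaultdict(list)
    for year, topic, score in reviews:
        buckets[(topic, year)].append(score)
    return {key: mean(scores) for key, scores in buckets.items()}


def trend_slope(series):
    """Ordinary least-squares slope of mean sentiment against year.

    `series` maps year -> mean score. A negative slope suggests
    declining sentiment for that topic.
    """
    years = sorted(series)
    if len(years) < 2:
        return 0.0
    x_bar = mean(years)
    y_bar = mean(series[y] for y in years)
    num = sum((y - x_bar) * (series[y] - y_bar) for y in years)
    den = sum((y - x_bar) ** 2 for y in years)
    return num / den


# Hypothetical toy data, for illustration only.
toy_reviews = [
    (2019, "customer_support", 0.4),
    (2019, "customer_support", 0.2),
    (2020, "customer_support", 0.1),
    (2021, "customer_support", -0.1),
    (2019, "app_usability", 0.6),
    (2020, "app_usability", 0.6),
    (2021, "app_usability", 0.6),
]

means = yearly_topic_sentiment(toy_reviews)
support = {y: m for (t, y), m in means.items() if t == "customer_support"}
usability = {y: m for (t, y), m in means.items() if t == "app_usability"}

support_slope = trend_slope(support)      # negative: sentiment is falling
usability_slope = trend_slope(usability)  # zero: sentiment is flat
```

A platform manager could rank topics by slope to see which concerns are worsening fastest. A simple linear slope ignores non-linear shifts and differences in yearly review volume, so it is a first screening step rather than a substitute for the full longitudinal analysis.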

AI-enabled lending services have evolved significantly, utilizing alternative credit scoring and automated risk assessments to improve financial inclusion and expedite loan approvals. Users now experience faster and more accessible credit services, fostering positive sentiment toward instant loan platforms. However, despite these advancements, our findings reveal a decline in user satisfaction in recent years, even as AI adoption deepens across various functions of lending. While AI-enabled processes like eligibility assessment, loan approval, and account management continue to generate positive sentiment, their diminishing impact on overall satisfaction raises important questions. This decline may be attributed to unmet expectations, a reduced novelty effect, or inconsistencies in AI implementation, which challenge the platforms' claims of unbiased, efficient, and frictionless lending. Moreover, persistent concerns about debt collection practices highlight the need for improved regulatory oversight and ethical business practices, even though our data do not indicate a specific role for AI.

Finally, while AI is widely used to support core functionalities of instant lending platforms, sustaining long‑term user satisfaction will depend on improving transparency, fairness, and responsible debt management across these services. Addressing these challenges will be crucial in ensuring that AI-enabled lending remains both efficient and trustworthy, fostering greater confidence among users in the years to come.

7. Limitations and Future Work

This study provides insights into user experiences with AI-enabled instant loan platforms, but it has several limitations. First, AI adoption and performance were not directly measured at the app level. References to "AI" refer to the broader AI-enabled environment rather than specific AI components. The non-experimental design prevents causal inferences about AI or particular platform features, making it impossible to separate AI-specific effects from general digital or organizational factors. Future research should include explicit indicators of AI functionalities. Second, the review data have their own limitations. Online reviews often suffer from selection bias, typically over-representing users with very positive or very negative experiences while under-representing silent or moderately satisfied customers. Consequently, the overall sentiment may be skewed, and our findings should be viewed as reflecting the opinions of active reviewers rather than the entire customer base. Future studies could combine large-scale review analytics with surveys, behavioural data, and multilingual corpora to achieve a more representative and diverse understanding of user sentiment. Third, methodological choices impose constraints. We used VADER for sentiment analysis, which may not effectively handle sarcasm, regulatory complaints, or specialized financial terminology. Future research could compare lexicon-based methods with supervised or transformer-based sentiment models (e.g., BERT or FinBERT) specifically designed for financial text and utilize Structural Topic Models (STM) to enhance topic analysis. Topic labels were derived from human interpretation of LDA outputs, and while expert judgment was applied, a more formal assessment of inter-rater reliability would strengthen validation. Finally, to characterize the overall ecosystem rather than focus on specific providers, we aggregated reviews from ten popular and high-download apps into a single corpus. This approach enhances coverage and statistical power but limits the ability to analyze app-level business practices, regulatory compliance, or variations in AI maturity. Future research should supplement this ecosystem-level perspective with app-specific or provider-level studies to capture such heterogeneity.
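As one possible form of the inter-rater reliability assessment mentioned above, two annotators could label the same LDA topics independently and their agreement could be summarized with Cohen's kappa. The sketch below is an illustration under that assumption, not a procedure used in this study; the function name, rater lists, and topic labels are hypothetical.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two raters labelling the same items.

    kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement
    and p_e is the agreement expected by chance from each rater's
    marginal label frequencies.
    """
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both raters must label the same non-empty item set.")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        # Both raters used a single identical label throughout.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical labels assigned by two annotators to six topics.
rater_a = ["support", "support", "repayment", "usability", "repayment", "usability"]
rater_b = ["support", "repayment", "repayment", "usability", "repayment", "usability"]

kappa = cohens_kappa(rater_a, rater_b)
```

Here the raters agree on five of six topics, and kappa discounts the share of that agreement expected by chance. With more than two annotators, a multi-rater statistic such as Fleiss' kappa would be the natural counterpart.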

Author Contributions Statement

Arivazagan Jayabalan – Conceptualization, Methodology, and Supervision: Proposed research ideas, constructed the overall framework, and supervised project execution.

Shahrukh Saleem – Data Curation and Software Implementation: Handled data acquisition, dataset preprocessing, and implementation of the research model.

Prem Kumar – Model Training, Validation, and Performance Evaluation: Led the model training process, validated results using standard metrics, and benchmarked performance against existing methods.

Sudalaimuthu Shanmugam – Formal Analysis, Visualization, and Statistical Analysis: Performed in-depth analysis of experimental results, prepared performance charts, and ensured the statistical robustness of the evaluation.

All authors have read and agreed to the published version of the manuscript.

Conflict of Interest Statement

The authors declare no conflicts of interest.

Funding Declaration

This research did not receive any external funding.

Data Availability Statement

The datasets of the current study are available from the corresponding author on reasonable request.

Ethical Declarations

The study was conducted using secondary data. Hence, there was no requirement for ethical approvals for the present study.

Acknowledgments

We sincerely thank the experts for their professional evaluation and valuable recommendations, which have contributed to improving the quality of the experiment and the reliability of its results.

Declaration of Generative AI in Scholarly Writing

The authors acknowledge the use of AI-based tools for minor language editing or grammatical correction. However, all intellectual contributions, analyses, and conclusions presented in this article are entirely the authors’ own.

Abbreviations

The following abbreviations are used in this manuscript:

AI – Artificial Intelligence

NLP – Natural Language Processing

P2P – Peer-to-Peer

ECT – Expectation–Confirmation Theory

LDA – Latent Dirichlet Allocation

VADER – Valence Aware Dictionary and sEntiment Reasoner

e-KYC – Electronic Know Your Customer

BERT – Bidirectional Encoder Representations from Transformers

Appendix

There are no appendices to this article.