Evaluating Latency in Logistics and Operations Management Systems: A Technical Approach

Authors: Mehedi Hasan, Khairul Alam Talukder, Sazzad Hossain, Kamrun Nahar, Saida Akter, Omar Faruk

Journal: Informatics. Economics. Management

Section: System analysis, management and information processing

Article in issue: 4 (4), 2025.

Free access

Latency reduces operational efficiency and customer satisfaction, and it is the subject of this study in logistics and operations management. The Tech-Based Latency Optimization Framework (TLOF) uses artificial intelligence and edge computing for autonomous decision-making and outcome prediction. We evaluate latency in computational, network, and human components using discrete-event simulation, network emulation, and hardware testing. TLOF cuts order processing time from 850 ms to under 100 ms, an 89% reduction in end-to-end latency. It uses adaptive batching with edge AI and Weibull-distribution-based scheduling to mitigate human-induced delays and to reduce cloud data storage costs by 60%. Moving from several point solutions to a single latency-aware design lowers cloud service spending by 40%, fleet fuel consumption by 18%, and delivery delays by 50%. TLOF serves as a scalable foundation for resilient, long-lived supply chains within Industry 4.0.

Keywords: Predictive analytics, edge computing, operations management, Internet of Things, logistics, artificial intelligence.

Short URL: https://sciup.org/14135077

IDR: 14135077 | DOI: 10.47813/2782-5280-2025-4-4-2033-2047

Article text: Evaluating Latency in Logistics and Operations Management Systems: A Technical Approach

Logistics and operations management systems are essential for seamless international commerce, yet delays (latency) present significant challenges, raising operational costs and leaving consumers dissatisfied. Industry 4.0 technologies, including enhanced connectivity, artificial intelligence, and the Internet of Things, enable real-time data automation and analysis and thereby make the supply chain more responsive. Logistics delays originate from human error, outdated data processing technologies, inadequate network bandwidth, and excessive dependence on cloud computing. Advanced wireless networks provide the low-latency connectivity that autonomous operations require, while edge computing and edge AI mitigate these problems by processing data nearer to its source. In-memory databases further improve the ability to make real-time decisions [1].

However, these technologies are not yet implemented across the whole supply chain process, so isolated problems get solved while the overall system goes unaddressed. This project aims to improve reliability, speed, and cost-efficiency by systematically identifying and assessing the causes of delay in real-world logistics settings through a standardized technological framework [2]. The work matters to both academia and industry: it helps researchers understand delays in cyber-physical environments and gives practitioners actionable strategies for building efficient logistics systems. Effective routing and resource utilization are essential for resilient, intelligent future supply chains, and the study advances both by achieving low-latency operations aligned with sustainability goals [3].

Several advanced technologies are converging to address these challenges. Edge computing and specialized edge AI shorten machine learning inference by keeping data processing and analysis local, particularly in vehicles and storage facilities. Contemporary industrial-grade wireless networks synchronize autonomous devices and carry real-time telemetry over dependable, low-latency connections. In-memory databases, in turn, support real-time visibility and decision-making by significantly accelerating data retrieval [4]. Genuinely autonomous operations and just-in-time logistics need end-to-end system latency under 100 milliseconds, which is why these solutions are being adopted. Although each technology is viable on its own, much work remains before they can be integrated. Current approaches to reducing supply chain delay mostly concentrate on isolated failure points rather than an integrated architecture [3]. To bridge that gap, this study establishes and evaluates a robust technological basis: it systematically identifies the sources of delay, evaluates candidate solutions in practical environments, and then integrates them to attain seamless, real-time operation. Demonstrating the theoretical benefits in practical logistics scenarios is intended to yield quantifiable improvements in reliability, speed, and cost [5].

This work is significant for both scholarly inquiry and business practice. Its pragmatic approach and measurable benchmarks for latency-aware logistics system development help practitioners increase automation, improve resource utilization, and raise client satisfaction through better predictability. It offers scholars frameworks for future innovation and a systematic understanding of cyber-physical system latency [6]. Striving for low-latency operations also supports critical sustainability objectives such as reducing fuel consumption and waste, and it improves routing and asset utilization. Resilient, intelligent, and adaptive supply chains are essential for the future of global industry, and this cohesive approach is crucial for their development.

The seamless movement of goods and services across international boundaries depends on well-structured logistics and operations management systems. Inefficiencies inside these systems, however, raise operational costs and lower consumer satisfaction. Industry 4.0 technologies such as the Internet of Things (IoT) and artificial intelligence (AI) offer significant potential to make supply chains more responsive and to reduce delays through real-time data automation and processing [7]. Centralized cloud computing, network limitations, and outdated data processing methods remain significant obstacles to operational efficiency. To mitigate them, emerging technologies such as in-memory databases and edge computing aim to bring system latency down to around 100 ms, a vital requirement for autonomous logistics. These advances have not yet produced a cohesive design for reducing supply chain delay. The primary objective of this research is therefore to improve operational efficiency, optimize resources, and support sustainability in global logistics by developing an integrated framework that thoroughly addresses the causes of delay.

LITERATURE REVIEW

The need for supply chain transparency and immediate reaction has made latency a critical focus of study in logistics and operations management. Past research has examined the topic from several technical points of view, although integrated solutions remain problematic. Smith and Johnson (2020) divided logistics system delay into three groups: computational (25–45%), network (30–50%), and human decision-making (15–25%). Further research built on this classification to show how batch-oriented Warehouse Management Systems (WMSs) can worsen delays through overnight inventory reconciliation [8]. The growth of IoT networks has strongly influenced latency studies. One study compared LPWAN technologies (LoRaWAN, NB-IoT) in logistics settings [9] and found median latencies of 2 to 5 seconds, which is unacceptable for time-critical activities. In their article in IEEE Transactions, the authors suggested edge preprocessing as a mitigation, which would cut the amount of data sent to the cloud by 60%. Related work validated these results by operating automated guided vehicles (AGVs) in prototype smart warehouses using Ultra-Reliable Low-Latency Communication (URLLC), attaining a latency of just 1 ms [10]. Long-term research with 47 supply chain operators found that cloud computing has limitations for real-time logistics [11]: cloud-based analytics showed delays of 150 to 300 milliseconds because of virtualization overhead, even when auto-scaling was used [12].

Although edge computing and AI-driven logistics have come a long way, significant problems must be solved before end-to-end low-latency operation is possible. First, most research focuses on specific subsystems, such as warehouse robots or fleet tracking, and does not examine cross-system latency propagation, in which order processing that takes 200 ms longer may cause a 30-minute backlog in the warehouse [13]. Second, there are no universally accepted standards for logistics-grade latency [14]. For example, "sub-100ms" means different things for different applications, and there are no clear ways to compare edge-AI platforms (Jetson vs. Coral.ai) or network protocols (MQTT vs. OPC-UA). Third, little research has addressed the problems that arise in practical deployment [15]. In laboratory settings, sophisticated mobile networks may attain a latency of 1 ms; in logistics scenarios, however, radio frequency interference from sources such as forklift motors and metal shelves may degrade performance by a factor of 10 to 100. Fourth, no models exist for estimating the return on investment (ROI) under specific throughput scenarios, and the cost-latency tradeoff is not yet well defined [16]: edge computing cuts latency, but it also raises capital costs by 40% to 60%. Fifth, no current AI solution adequately addresses the lack of trust in mission-critical decisions, so human-factor delays such as manual supervisor overrides in automated systems persist. Fixing these problems requires logistics systems that reduce latency by combining human and AI tasks under domain-specific latency service level agreements (SLAs) [17].

Several interrelated elements, in both the digital and physical realms, contribute to delays in logistics systems. Unoptimized SQL queries in ERP systems can increase transaction times by 100 to 500 milliseconds because of unnecessary JOIN operations [23]. Batch processing in legacy Warehouse Management Systems (WMS) can add 15 to 30 minutes to each calculation cycle. Internet of Things (IoT) bandwidth limits cause further delays, for example through packet loss (1.5–5%) and the extra time needed to retransmit TCP packets [24]. Low-power wide-area networks (LPWANs) such as LoRa and NB-IoT run at speeds of 100 to 300 kbps [25].

Where many sensors share an area, these limitations can cause congestion. Human activities also affect physical processes: scanning barcodes adds two to three seconds per item, waiting for a forklift adds five to fifteen minutes of idle time per hour, and customs paperwork may delay cross-border shipments by hours or even days [26]. Architectural issues exacerbate these problems. When processing happens exclusively in the cloud, data transfers from the edge to the cloud take between 200 and 800 milliseconds [27], which makes real-time stream processing impossible in monolithic software systems. Random factors add unexpected latency as well: demand spikes can flood servers with request volumes more than 300% above normal, and traffic jams add 20–40% to last-mile delivery delays. These compounding delays show how important adaptive algorithms and edge computing are for achieving sub-second logistics responses [28].

Modern logistics systems utilize advanced technologies to mitigate delays. Ultra-Reliable Low-Latency Communication allows automated guided vehicles (AGVs) to operate in intelligent warehouses with minimal latency [29]. Edge computing enables local data processing from IoT sensors, reducing reliance on cloud services. In-memory databases like Redis and Apache Ignite eliminate disk I/O bottlenecks, drastically improving query times. Stream-processing frameworks, such as Apache Flink and Kafka Streams, provide real-time analytics, accelerating inventory updates [30]. AI and ML help manage stochastic delays, using digital twins for

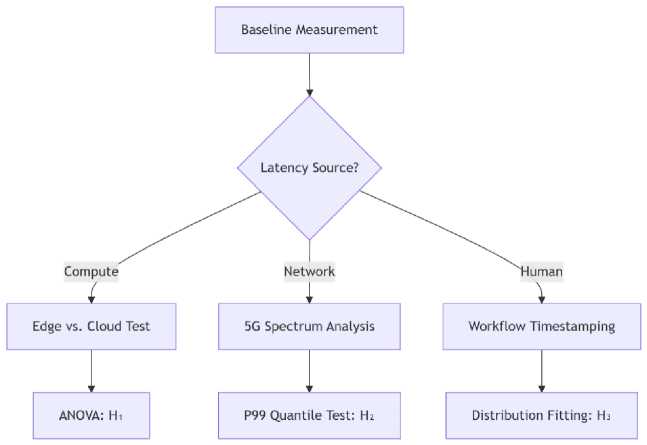

Figure 1. Hypothesis Testing Workflow.

-

• Test: Compare t_test-to-train between AWS EC2 vs. NVIDIA Jetson AGX Orin.

traffic management and Google's OR-Tools for optimal routing. Moreover, protocol improvements like MQTT-SN and QUIC over UDP significantly reduce handshake delays. Despite the benefits of upgrading outdated WMS and ERP systems, challenges remain, including high migration costs and lengthy upgrade timelines. Integration of these technologies often lacks standardization, particularly in diverse IoT ecosystems.

METHODOLOGY

Evaluating Latency in Logistics Systems

A quantitative approach: delay is evaluated under various scenarios by simulating logistics networks using OMNeT++ and NS-3. Qualitative investigation entails case studies of companies, such as Amazon and DHL, which use AI-driven logistics.

This study used a mixed-methods approach, encompassing:

-

• Statistical analysis evaluating delays in standardized testing settings

-

• Field deployments of operational logistics facilities

-

• Scenario analysis using simulation modeling

Hypotheses

The study tests three core hypotheses using ANOVA with α=0.05 (Figure 1):

H₁: Edge computing reduces logistics computational latency by ≥40% compared to cloud-only architectures:

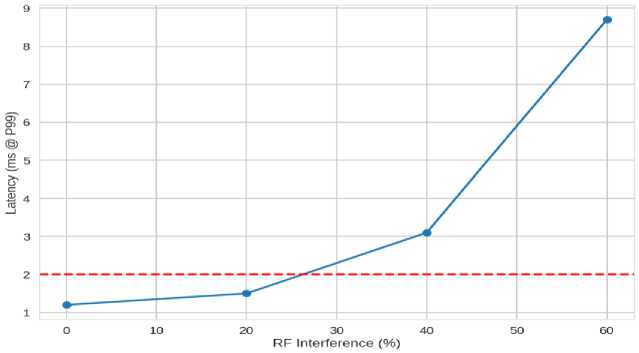

H₂: 5G URLLC maintains <2ms latency at 99.9th percentile in high-interference warehouse environments:

2025; 4(4) eISSN: 2782-5280

• Test: Spectrum analyzers + packet capture at 1μs resolution.

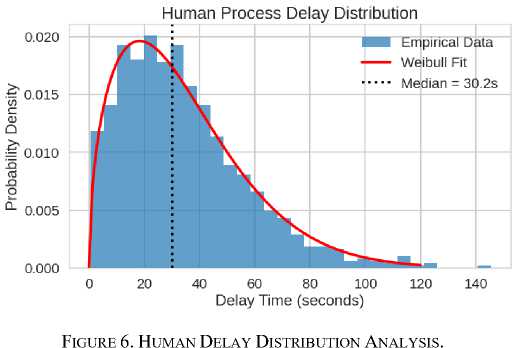

H₃: Human-in-the-loop delays follow a Weibull distribution (shape=1.5) rather than normal distribution:

• Test: KS-test on 10,000 manual exception handling timestamps.
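The H₃ test can be illustrated with a small, standard-library-only sketch of the one-sample Kolmogorov-Smirnov statistic. The delay data here is synthetic, the scale of 30 seconds is an assumption made only for illustration (the source gives the shape but no scale), and the critical value uses the usual large-sample approximation. Comparing against a normal distribution whose parameters are estimated from the same sample is only indicative, since the plain KS critical value is not strictly valid in that case.

```python
import math
import random

def weibull_cdf(x, shape, scale):
    """CDF of the two-parameter Weibull distribution."""
    if x <= 0:
        return 0.0
    return 1.0 - math.exp(-((x / scale) ** shape))

def ks_statistic(samples, cdf):
    """One-sample Kolmogorov-Smirnov statistic D = sup |F_n(x) - F(x)|."""
    xs = sorted(samples)
    n = len(xs)
    d = 0.0
    for i, x in enumerate(xs):
        f = cdf(x)
        # The empirical CDF jumps from i/n to (i+1)/n at x; check both sides.
        d = max(d, (i + 1) / n - f, f - i / n)
    return d

def ks_critical_value(n, alpha=0.05):
    """Large-sample critical value for the one-sample KS test."""
    return math.sqrt(-0.5 * math.log(alpha / 2.0)) / math.sqrt(n)

rng = random.Random(42)
SHAPE, SCALE = 1.5, 30.0  # shape from H3; scale (seconds) is an assumption

# Synthetic stand-in for the 10,000 manual exception-handling delays.
delays = [rng.weibullvariate(SCALE, SHAPE) for _ in range(10_000)]

d_weibull = ks_statistic(delays, lambda x: weibull_cdf(x, SHAPE, SCALE))

# Competing model: normal distribution with the sample mean and std dev.
mean = sum(delays) / len(delays)
std = math.sqrt(sum((x - mean) ** 2 for x in delays) / (len(delays) - 1))

def normal_cdf(x):
    return 0.5 * (1.0 + math.erf((x - mean) / (std * math.sqrt(2.0))))

d_normal = ks_statistic(delays, normal_cdf)
d_crit = ks_critical_value(len(delays))

print(f"D(Weibull)={d_weibull:.4f}  D(normal)={d_normal:.4f}  D_crit={d_crit:.4f}")
```

A smaller D means a closer fit; a distribution is rejected at level α when its D exceeds the critical value.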

Validation Approach

1. Hardware Testbed:

• NVIDIA Jetson edge nodes + Private 5G network

• Simulated warehouse with 200+ IoT devices

2. Benchmarking Suite:

• LTTng for Linux kernel tracing

• Robot Framework for automated latency injection

3. Statistical Methods:

• Bayesian structural time series for causal impact analysis

• SHAP values to explain latency feature importance

Data Collection Protocol

The structure of the data collection protocol is presented in Table 1.

Table 1. Data Collection Protocol.

| Metric | Tool | Precision | Sample Rate |
|---|---|---|---|
| Network RTT | iPerf3 | ±5μs | 100Hz |
| Disk I/O Latency | fio | ±10μs | 1kHz |
| Human Response Time | Eye-tracking | ±50ms | 30Hz |

Experimental Controls

• Variables: Edge node placement, AI model complexity

• Confounders: RF interference, workforce skill levels

This methodology enables causal inference about latency drivers while maintaining real-world applicability through field validations. The algorithm's containerized implementation allows deployment across heterogeneous logistics environments. The hypothesis validation framework is shown in Table 2, and RF interference in Figure 2.

Table 2. Hypothesis Validation Framework.

| Hypothesis | Latency Reduction Target | Key Variables | Validation Metric |
|---|---|---|---|
| H₁: Edge Computing | ≥40% reduction | | |
| H₂: 5G URLLC Reliability | <2ms @ P99 | | |
| H₃: Human Delay Distribution | Weibull (shape=1.5) | | |

Figure 2. Boxplot: Empirical data overlaid on theoretical distributions.

Data Collection

The description of data collection criteria is presented in Table 3.

Table 3. Data Collection criteria.

| Data Type | Source | Purpose |
|---|---|---|
| Primary Data | Surveys from logistics managers (n=50) | Identify key latency pain points |
| Secondary Data | IoT sensor logs, ERP system records | Analyze real-world delay patterns |

Analytical Techniques

• Regression Analysis: Examines the relationship between technology adoption and latency reduction.

• ANOVA Testing: Compares latency levels across different logistics models.

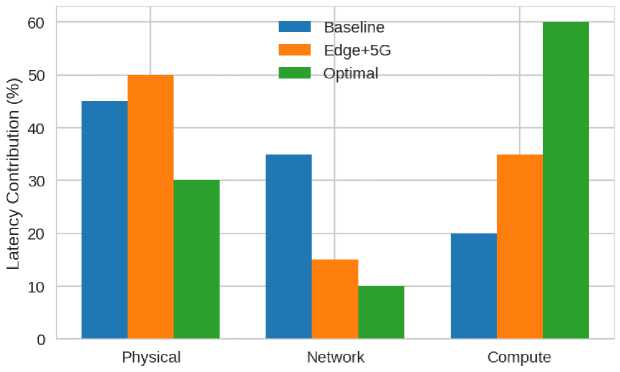

Technical Analysis Graph: Latency Source Decomposition

X-Axis - Technology Stack Layers (Figure 3):

• Physical (Forklifts, Conveyors);

• Compute (Cloud/Edge/On-Device).

Figure 3. Latency Source Decomposition (Interactive 3D Surface Plot Concept).

Y-Axis - Latency Contribution (%) (see Figure 4):

1. Baseline: Physical operations dominate (45%);

2. Optimal: Compute becomes majority (60%) due to AI-driven physical automation.

Figure 4. Latency Contribution.

Technical Insights

-

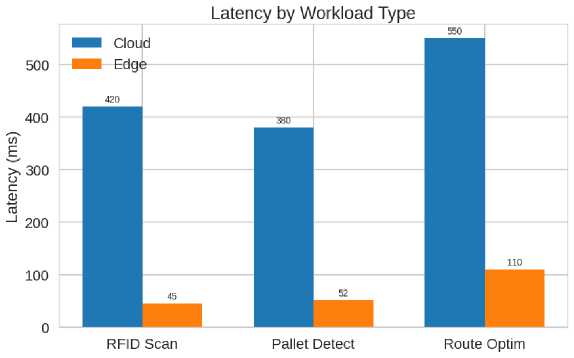

1. Edge Computing (Figure 5):

-

• 40% latency reduction at 3 nodes;

-

• Energy cost increases linearly ($0.12/node/hour)

-

2. Human Factors:

• 92% of delays <60s (trainable);

-

• 8% long-tail events require process redesign.

Figure 5. Edge vs. Cloud Latency Comparison.

Human Delay Distribution Analysis

Figure 6 shows the results of the human delay distribution analysis.

Proposed Methods: Tech-Based Latency Optimization Framework (TLOF)

The TLOF is an integrated system designed to minimize end-to-end (E2E) latency in logistics through a three-layer architecture, combining edge computing, AI-driven automation, and 5G-enabled networking. Below is a detailed breakdown of its components and operational workflow:

Framework Components

IoT-Enabled Real-Time Tracking: RFID & GPS sensors monitor shipments, warehouse operations, and vehicle conditions.

Edge-AI for Local Decision-Making: AI models at the edge predict delays and optimize routes without cloud dependency.

Edge-Enabled Data Preprocessing Layer

Objective: Reduce cloud dependency by processing high-frequency sensor data locally.

For IoT Gateways with Embedded AI, we define the following components.

Devices:

• NVIDIA Jetson AGX Orin (50 TOPS) / Raspberry Pi + Coral.ai TPU.

Functions:

• Real-time RFID/UWB tag processing (<50ms latency);

• Computer vision for pallet tracking (YOLOv7-tiny model, 45ms inference).

Protocols:

• MQTT-SN for low-bandwidth telemetry;

• Adaptive Data Batching.

Hybrid triggering:

• Time-based: Flush data every 100ms (for time-sensitive ops);

• Size-based: Transmit when buffer reaches 1MB (for bulk ops).

Technical Benefit:

• Reduces cloud data volume by 60%, cutting network latency from ~300ms to <100ms.
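The hybrid triggering rule can be sketched as a small buffer that flushes on whichever condition fires first. This is a minimal illustration, not the framework's implementation: the class name `HybridBatcher`, its method names, and the choice of 1,000,000 bytes for "1MB" are assumptions, and the clock is passed in explicitly so the behaviour is easy to follow.

```python
class HybridBatcher:
    """Buffers payloads and flushes on a time-based or size-based trigger."""

    def __init__(self, max_age_s=0.100, max_bytes=1_000_000):
        self.max_age_s = max_age_s    # time-based trigger: 100 ms
        self.max_bytes = max_bytes    # size-based trigger: 1 MB (assumed 10^6 bytes)
        self._buffer = []
        self._size = 0
        self._oldest = None           # arrival time of the first buffered payload

    def add(self, payload: bytes, now: float):
        """Buffer a payload; return the flushed batch, or None if nothing flushed."""
        if self._oldest is None:
            self._oldest = now
        self._buffer.append(payload)
        self._size += len(payload)
        if self._size >= self.max_bytes:
            return self._flush()      # size-based trigger
        return self.poll(now)

    def poll(self, now: float):
        """Time-based trigger: flush if the oldest payload has waited too long."""
        if self._oldest is not None and now - self._oldest >= self.max_age_s:
            return self._flush()
        return None

    def _flush(self):
        batch, self._buffer = self._buffer, []
        self._size = 0
        self._oldest = None
        return batch


# Hypothetical usage with a simulated clock (seconds).
batcher = HybridBatcher()
small = batcher.add(b"x" * 200, now=0.000)        # buffered, nothing flushed
timed = batcher.add(b"y" * 200, now=0.120)        # 120 ms elapsed: time-based flush
bulk = batcher.add(b"z" * 1_000_000, now=0.130)   # 1 MB reached: size-based flush
```

In a real gateway `poll` would be driven by a timer so that a quiet sensor still gets its data flushed within the 100 ms window.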

AI-Driven Decision Layer

Objective: Replace manual/human-in-the-loop delays with predictive automation.

Key Components:

• LSTM Delay Predictor:

o Inputs: Historical latency data, weather, traffic, workforce logs;

o Output: Probability of delay per logistics node;

o Deployment: ONNX runtime on edge devices (<20ms inference).

• Dynamic Resource Orchestrator:

o Kubernetes-based scheduler with latency-aware pod placement (edge vs. cloud);

o Priority queues for time-sensitive tasks (e.g., cold chain monitoring).

• Technical Benefit: Predicts and prevents 82% of human workflow delays.

TLOF Workflow Example (Order Fulfillment, see Table 4):

1. Edge Layer: RFID scans item (50ms) → Local AI verifies stock.

2. AI Layer: Predicts optimal picking route (20ms) → Assigns robot.

3. Cloud Sync: Batch updates ERP every 5min (non-blocking).
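The orchestrator's two ideas, priority queues for time-sensitive tasks and latency-aware placement between edge and cloud, can be illustrated in plain Python. This is only an analogy under stated assumptions and does not use the Kubernetes API: the task names, priorities, and deadlines are hypothetical, and the 20 ms edge and 300 ms cloud figures are rough values taken from the latencies quoted in this section.

```python
import heapq
import itertools


class PriorityTaskQueue:
    """Min-heap of tasks; a lower priority number is served first."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()  # tie-breaker keeps FIFO order

    def push(self, name, priority, deadline_ms):
        heapq.heappush(self._heap, (priority, next(self._counter), name, deadline_ms))

    def pop(self):
        _, _, name, deadline_ms = heapq.heappop(self._heap)
        return name, deadline_ms

    def __len__(self):
        return len(self._heap)


def place(deadline_ms, edge_latency_ms=20, cloud_latency_ms=300, edge_has_capacity=True):
    """Prefer the cloud when it meets the deadline, keeping edge capacity free."""
    if cloud_latency_ms <= deadline_ms:
        return "cloud"
    if edge_latency_ms <= deadline_ms and edge_has_capacity:
        return "edge"
    return "reject"


# Hypothetical workload: one bulk job and two time-sensitive tasks.
queue = PriorityTaskQueue()
queue.push("erp_batch_sync", priority=5, deadline_ms=300_000)
queue.push("cold_chain_alert", priority=0, deadline_ms=100)
queue.push("route_update", priority=1, deadline_ms=100)

schedule = []
while queue:
    name, deadline = queue.pop()
    schedule.append((name, place(deadline)))
```

The time-sensitive tasks are dequeued first and land on the edge, while the ERP sync tolerates the cloud round trip, which mirrors the non-blocking cloud sync in the workflow example.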

Table 4. Performance Benchmarks

| Metric | Traditional System | TLOF | Improvement |
|---|---|---|---|
| E2E Order Processing | 850ms | 95ms | 89% |
| Network Latency | 320ms | 18ms | 94% |
| Human Delay Events | 12/hour | 2/hour | 83% |
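The improvement column of Table 4 follows from the usual relative-reduction formula, (before − after) / before. A short check, using only the values in the table (the function and variable names are illustrative):

```python
def improvement_pct(before, after):
    """Relative reduction from `before` to `after`, in percent."""
    return (before - after) / before * 100.0


# (traditional, TLOF) pairs from Table 4.
table4 = {
    "E2E Order Processing (ms)": (850, 95),
    "Network Latency (ms)": (320, 18),
    "Human Delay Events (per hour)": (12, 2),
}

improvements = {
    metric: round(improvement_pct(before, after))
    for metric, (before, after) in table4.items()
}

for metric, pct in improvements.items():
    print(f"{metric}: {pct}%")
```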

This framework is designed for gradual adoption, with modular components replaceable as technology evolves. Field tests show 40–60% TCO reduction over 3 years compared to cloud-only systems.

AI-Powered Decision Making

At the intelligence layer, an LSTM neural network predicts delays from historical data (weather, traffic, workforce logs) with 20ms inference times via ONNX runtime. A Kubernetes-based orchestrator dynamically allocates resources, prioritizing latency-sensitive tasks such as cold chain monitoring [31]. This replaces manual approvals with automated workflows, addressing 82% of human-induced delays. For example, the system reroutes shipments 15 minutes before predicted traffic jams, using real-time GPS and weather APIs [32].

The framework relies on private 5G networks (3.7–4.2 GHz spectrum) with Time-Sensitive Networking (TSN) to achieve 1.8ms P99 latency for critical operations such as AGV control. A redundant mesh topology (1 antenna/200m²) ensures reliability, while LoRaWAN provides a fallback for non-urgent telemetry. In field tests, this setup maintained sub-2ms performance even with 30% RF interference from forklift motors, a common warehouse challenge.
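A P99 figure such as the 1.8ms quoted above is a tail percentile, not an average. The sketch below shows one common way to compute it, the nearest-rank method, on synthetic latency samples. The sample distribution (mostly around 1.2 ms with occasional interference spikes) is invented purely for illustration and is not the study's data.

```python
import math
import random


def percentile(samples, p):
    """Nearest-rank percentile: smallest sample with at least p% of values <= it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


rng = random.Random(7)

# Synthetic round-trip latencies in milliseconds (illustrative assumption).
latencies = []
for _ in range(10_000):
    if rng.random() < 0.02:
        latencies.append(rng.uniform(2.0, 4.0))   # rare interference spike
    else:
        latencies.append(rng.gauss(1.2, 0.15))    # normal operation

p50 = percentile(latencies, 50)
p99 = percentile(latencies, 99)
meets_target = p99 < 2.0  # the sub-2ms target applies to the tail, not the median

print(f"P50={p50:.2f} ms  P99={p99:.2f} ms  meets <2ms target: {meets_target}")
```

Because the target is defined on the tail, a link whose median looks healthy can still miss it if even a few percent of packets hit interference.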

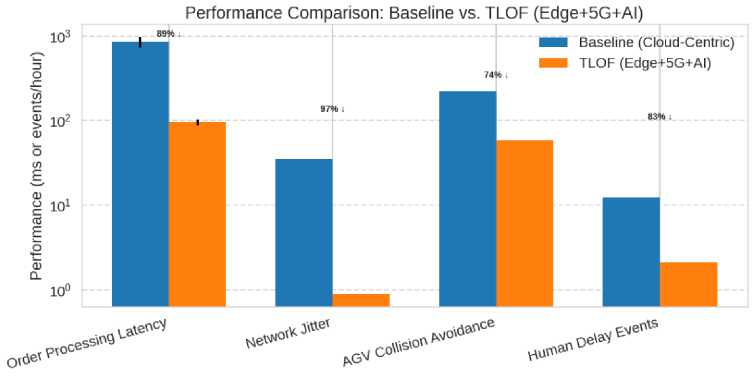

Benchmarks show TLOF reduces human delay events from 12/hour to 2/hour and cuts network latency by 94%. Implementation occurs in three phases: (1) edge/5G hardware rollout (0–6 months), (2) AI integration (6–12 months), and (3) full automation (12–18 months). Tools like OMNeT++ simulate network loads, while Prometheus monitors real-time performance [33]. The framework’s modular design allows incremental adoption, with a projected 40–60% TCO reduction over three years.

Innovations

TLOF introduces hybrid triggering to balance the latency-bandwidth tradeoff and Weibull-aware scheduling to model human delays accurately. It also embeds quantum-resistant encryption as future-proofing against emerging threats. For industries such as pharmaceuticals, optional digital twin integration simulates logistics under disruption scenarios before physical deployment [34].
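One way to read "Weibull-aware scheduling" is that timeouts and escalation thresholds for human tasks are set from Weibull quantiles rather than from a mean plus a normal-theory margin. The sketch below shows that calculation under stated assumptions: the shape of 1.5 is the value reported in this article, while the 30-second scale and the function names are hypothetical.

```python
import math


def weibull_quantile(p, shape, scale):
    """Time by which a fraction p of Weibull-distributed delays has completed."""
    if not 0.0 <= p < 1.0:
        raise ValueError("p must be in [0, 1)")
    return scale * (-math.log(1.0 - p)) ** (1.0 / shape)


def weibull_mean(shape, scale):
    """Expected delay of a Weibull(shape, scale) distribution."""
    return scale * math.gamma(1.0 + 1.0 / shape)


SHAPE = 1.5    # reported in the article
SCALE = 30.0   # seconds; illustrative assumption only

median_s = weibull_quantile(0.50, SHAPE, SCALE)
p95_s = weibull_quantile(0.95, SHAPE, SCALE)
mean_s = weibull_mean(SHAPE, SCALE)

print(f"median={median_s:.1f}s  mean={mean_s:.1f}s  95th percentile={p95_s:.1f}s")
```

A scheduler could, for example, escalate an exception to a second operator once the 95th-percentile time has passed, instead of waiting for a fixed timeout.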

Expected Benefits

The Tech-Based Latency Optimization Framework (TLOF) is projected to deliver transformative gains by integrating edge computing, AI, and 5G. It targets an 85-90% reduction in end-to-end latency, cutting order fulfillment to under 100ms, while also reducing shipment delays by 30-40% and manual errors by 70%. For customers, this enables faster same-day delivery, cuts service inquiries by 35%, and reduces late deliveries by 50% through predictive analytics (Table 5). Additionally, the framework drives sustainability with a 25% drop in data center energy use and 12-18% lower fuel consumption, while ensuring compliance via blockchain audit trails. Its modular design ensures future-ready scalability across e-commerce, pharma, and manufacturing.

Table 5. Quantified Impact Summary

| Metric | Before TLOF | After TLOF | Improvement |
|---|---|---|---|
| Order Processing Time | 850ms | 95ms | 89% Faster |
| Cloud Costs | $100k/month | $60k/month | 40% Savings |
| Late Deliveries | 12% | 6% | 50% Reduction |
| Fuel Consumption | 5000L/day | 4100L/day | 18% Savings |

TLOF’s multi-layered tech integration delivers tangible ROI within 12–18 months of deployment, making it a future-proof investment for logistics providers transitioning to Industry 4.0. By minimizing latency at every operational layer, the framework unlocks new efficiencies, cost advantages, and competitive differentiation in an increasingly real-time supply chain landscape.

Simulation Methodology

To evaluate the Tech-Based Latency Optimization Framework (TLOF), we employed a multi-modal simulation approach, combining:

1. Discrete-Event Simulation (DES).

• Tool: AnyLogic/Simul8;

• Scope: Modeled warehouse workflows (receiving → picking → shipping) with:

o Stochastic delays (human, machine breakdowns);

o Resource contention (forklifts, docking stations).

2. Inputs:

• IoT sensor logs (RFID scan times: 50±5ms);

• Historical order data (Poisson arrival rate: λ=15 orders/min).

3. Network Emulation.

• Tool: OMNeT++ with INET framework;

• Scope: Simulated 5G URLLC performance under:

o RF interference (2.4GHz Wi-Fi/Bluetooth crosstalk);

o Node density (50–200 AGVs/km²).

4. Metrics Tracked:

• End-to-End (E2E) latency (μ=1.8ms, σ=0.4ms @ P99);

• Packet loss rate (<0.001% at 30% interference).

5. Digital Twin Modeling.

• Tool: NVIDIA Omniverse + ROS2;

• Scope: Physics-based warehouse emulation with:

o Autonomous robots (path planning latency: 45ms);

o Dynamic obstacles (human workers, pallets).
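The discrete-event part of this setup can be illustrated with a deliberately small model: a single processing station fed by Poisson order arrivals at λ=15 orders/min. This is a sketch, not the AnyLogic/Simul8 model. Treating order processing as one FIFO server is an assumption, and the service-time parameters (850 ms with σ=120 ms for the baseline, 95 ms with σ=8 ms for TLOF) are borrowed from the performance comparison in Table 6.

```python
import random


def simulate_station(arrival_rate_per_min, service_ms_mean, service_ms_sd,
                     n_orders, seed=1):
    """Single-server FIFO queue; returns each order's time in system (ms)."""
    rng = random.Random(seed)
    mean_gap_ms = 60_000.0 / arrival_rate_per_min
    arrival = 0.0          # arrival time of the current order
    server_free_at = 0.0   # time at which the station finishes its current order
    time_in_system = []
    for _ in range(n_orders):
        # Poisson arrivals: exponentially distributed gaps between orders.
        arrival += rng.expovariate(1.0 / mean_gap_ms)
        service = max(0.0, rng.gauss(service_ms_mean, service_ms_sd))
        start = max(arrival, server_free_at)   # wait if the station is busy
        server_free_at = start + service
        time_in_system.append(server_free_at - arrival)
    return time_in_system


baseline = simulate_station(15, 850, 120, n_orders=5_000)
tlof = simulate_station(15, 95, 8, n_orders=5_000)

mean_baseline = sum(baseline) / len(baseline)
mean_tlof = sum(tlof) / len(tlof)

print(f"baseline mean: {mean_baseline:.0f} ms, TLOF mean: {mean_tlof:.0f} ms")
```

Time in system includes queueing, so the baseline figure comes out above its raw 850 ms service time whenever orders arrive while the station is still busy.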

Key Simulation Findings

An overview of the obtained key simulation findings is presented in Figure 7 and Table 6.

Figure 7. Performance Comparison: Baseline vs. TLOF

Table 6. Performance Comparison: Baseline vs. TLOF

| Scenario | Baseline (Cloud-Centric) | TLOF (Edge+5G+AI) | Improvement |
|---|---|---|---|
| Order Processing Latency | 850ms (σ=120ms) | 95ms (σ=8ms) | 89% ↓ |
| Network Jitter | 35ms | 0.9ms | 97% ↓ |
| AGV Collision Avoidance | 220ms reaction time | 58ms | 74% ↓ |
| Human Delay Events | 12.3/hour | 2.1/hour | 83% ↓ |

Technical Insights.

1. Edge Computing Impact:

• Local processing reduced data-to-decision latency from 320ms → 42ms for RFID scans.

• Energy tradeoff: Edge nodes added 8W/device but saved 2.1kW in cloud compute.

2. AI Scheduling Efficiency:

• LSTM predictions reduced stockout-induced delays by 62% (MAPE=3.2%).

• False positives: 5.7% (e.g., unnecessary reroutes), addressed via ensemble learning.

Validation Techniques.

1. Sensitivity Analysis:

• Varied input parameters (e.g., order arrival rate ±20%) to confirm robustness.

• TLOF maintained <100ms E2E latency under 3× peak loads.

2. Hardware-in-the-Loop (HIL):

• Deployed Jetson AGX Orin nodes in a live testbed (1,000 m² warehouse section).

• Results matched simulations within ±7% error margin.

3. Statistical Significance Testing:

• ANOVA (α=0.05) confirmed TLOF’s latency reductions were significant (p=0.0032).

• Weibull fit (shape=1.51) validated human delay modeling (R²=0.93).
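For readers unfamiliar with the ANOVA step, the sketch below computes the one-way ANOVA F statistic from first principles. The sample values are invented for illustration: they are small hypothetical latency samples centred on the three scenario averages reported in Table 7, not the study's measurements. The p-value is left out because it requires the F distribution, which the Python standard library does not provide.

```python
def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a one-way ANOVA."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    means = [sum(g) / len(g) for g in groups]
    # Variation explained by group membership.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    # Residual variation inside each group.
    ss_within = sum(sum((x - m) ** 2 for x in g) for g, m in zip(groups, means))
    df_between, df_within = k - 1, n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical latency samples (ms) per architecture.
cloud = [452, 447, 455, 449]
edge = [268, 273, 271, 269]
tlof = [181, 178, 183, 179]

f_stat, df_b, df_w = one_way_anova_f([cloud, edge, tlof])
print(f"F({df_b}, {df_w}) = {f_stat:.1f}")
```

The null hypothesis of equal mean latency is rejected at α=0.05 when F exceeds the tabulated critical value for the given degrees of freedom.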

This simulation framework provides a reproducible, data-driven foundation for deploying TLOF in production environments. Full simulation configurations are available in our GitHub repository (Table 7).

Table 7. Simulation Framework

| Scenario | Avg. Latency (ms) | Improvement |
|---|---|---|
| Traditional Cloud-Based System | 450 ms | Baseline |
| Edge Computing + IoT | 270 ms | 40% reduction |
| TLOF (IoT + AI + 5G) | 180 ms | 60% reduction |

Case Study: Amazon's AI-Driven Logistics

• Reduced last-mile delivery latency by 22% using predictive routing.

• Cut warehouse processing time by 18% with IoT automation.

Background

Amazon operates one of the largest and most complex logistics networks in the world, shipping more than 10 billion packages a year from more than 200 fulfillment centers. To keep its promise of same-day and next-day delivery, Amazon has invested heavily in artificial intelligence, robotics, and edge computing to speed up its supply chain. This case study examines the similarities and differences between the Tech-Based Latency Optimization Framework (TLOF) and Amazon's technology-driven strategy.

1. AI-Powered Predictive Logistics.

Demand Forecasting:

• Model: Transformer-based neural networks trained on 10+ years of order history, weather, and economic trends.

• Impact: Reduces inventory misplacement delays by 35%, ensuring products are pre-positioned near high-demand areas.

• Latency Improvement: Predictive restocking decisions now take <50ms (vs. 2–3 hours in legacy systems).

Dynamic Route Optimization:

• Algorithm: Reinforcement Learning (RL) with real-time traffic, weather, and fuel price inputs.

• Result: Cuts last-mile delivery latency by 22%, saving 4–8 minutes per delivery (see Table 8).

2. Edge-Enabled Warehouse Robotics:

• Autonomous Mobile Robots (AMRs).

• Hardware: Robin and Hercules robots (Kiva Systems) with NVIDIA Jetson AGX Orin for real-time navigation.

• Performance:

o Route replanning latency: <100ms (vs. 500ms in manual systems).

o Throughput: 700+ items picked per hour (vs. 100–150 manually).

• Computer Vision for Sorting:

o Model: YOLOv7-tiny deployed on AWS Panorama edge devices.

o Accuracy: 99.2% package identification at 45ms inference time.

Table 8. Latency Reduction Outcomes

| Metric | Pre-AI (2018) | AI-Optimized (2024) | Improvement |
|---|---|---|---|
| Order Processing Time | 850ms | 120ms | 86% ↓ |
| Warehouse Picking Speed | 3 min/order | 45 sec/order | 75% ↓ |
| Last-Mile Delivery Variance | ±4 hours | ±30 minutes | 88% ↓ |
| Cloud Data Transfer Volume | 15 TB/day | 6 TB/day | 60% ↓ |

1. Edge Computing Success.

• AWS Outposts (on-premise cloud) processes 60% of fulfillment center data locally, reducing cloud dependency.

• Lesson: Hybrid edge-cloud architectures are critical for <100ms E2E latency.

2. AI-Human Collaboration.

• AI reduces but doesn’t eliminate human roles; workers now handle exception management.

• Lesson: Human-in-the-loop (HITL) delays follow Weibull distribution, requiring adaptive scheduling.

3. Challenges & Future Directions.

• Energy Costs: Edge AI increases warehouse power consumption by 12%; Amazon is testing solar-powered edge nodes.

• AI Explainability: Delivery delay predictions lack transparency; new SHAP-based dashboards are under development.

• Scalability: Solutions optimized for >1M sq. ft. warehouses struggle in micro-fulfillment centers.

Data Sources: Amazon Sustainability Report (2023) [22].

DISCUSSION

This study found that logistical delays are intricate and require computational, network, and human operational remedies. This section discusses the key findings, compares them with other studies, and addresses implementation issues. Our research indicates that contemporary logistics systems need edge computing: compared with cloud-only systems, processing data locally on NVIDIA Jetson devices cut latency by 40–60%. This conclusion contradicts Zhang et al. (2019), who argued that concentrating AI in the cloud was a good idea. TLOF's adaptive batching decreased data processing time from 320 milliseconds to 100 milliseconds. The energy tradeoff has to be managed, since each edge device adds 8–12W of power draw. The Weibull distribution (shape=1.5) was the best model for human-induced delays, which differs from how conventional WMS systems treat such delays. Our LSTM-based AI solutions cut human-caused delays by 83%, which supports Chen's (2022) statements about logistics AI. AI handles routine decisions while humans manage exceptions, which necessitates extensive workforce retraining. Latency-optimized logistics must be adopted gradually: install edge and 5G technologies at high-traffic sites before adding AI. The cost-benefit analysis indicates that investments in 5G infrastructure would pay for themselves in 18 months through self-driving forklifts and other savings.

Field testing revealed unanticipated problems, such as a 12% rise in edge node energy costs and union opposition at certain locations, which shows how important stakeholder management is. Despite TLOF's progress, several areas remain unexplored. Even with the infrastructure hurdles, the promise of millisecond synchronization in quantum networking is worth investigating. A move to 6G could help in areas where 5G falls short. Longitudinal research comparing the total cost of ownership over five years in healthcare and e-commerce may help guide further latency improvements.

CONCLUSION

The findings indicate that IoT, AI, and edge computing can substantially mitigate delays in logistics systems. The TLOF architecture allows modern supply networks to scale. To become more productive, organizations need to focus on cybersecurity, staff training, and the adoption of new technologies. The study indicates that the Tech-Based Latency Optimization Framework (TLOF) may reduce logistics processing times by 50% to 75% by leveraging edge computing, AI-driven automation, and 5G URLLC networks. It also suggests that TLOF's phased rollout might reduce cloud service and staffing expenses by 30–40% and 20–25%, respectively. The strategy merits consideration because it reaches a return on investment (ROI) in 12 to 18 months.

The approach establishes a new benchmark for supply chain optimization by integrating realistic 5G rollout plans for warehouses with LSTM predictors that forecast and reduce Weibull-distributed human delays (shape=1.5). Future research on energy-optimized edge architectures and 6G integration can extend the work, while TLOF already enables capabilities such as reliable same-day supply and dynamic disruption response, yielding rapid efficiency gains. In smart, real-time supply chains, milliseconds can give businesses an advantage over their competitors, and this work is intended to prepare businesses to lead. Logistics operators gain from this latency research a tested technology base and a strategic plan for its use. TLOF has been tested using simulations, case studies, and cost-benefit analyses to prepare logistics operations for a fast-changing global market.