Mechanisms for Building Trust in AI-Assisted Justice Systems Through Cognitive, Emotional and Socio-Psychological Factors

Author: Gulyamov S.S.

Journal: Вестник Пермского университета. Юридические науки (Perm University Herald. Juridical Sciences)

Section: International Legal Sciences

Article in issue: 3 (69), 2025.


Introduction: artificial intelligence (AI) is progressively gaining traction within legal and judicial spheres, which necessitates a re-examination of concepts such as transparency, fairness, reliability, and legitimacy. The implementation of explainable artificial intelligence (XAI) is a key element in ensuring trust in such systems, though ‘explainability’ does not in itself guarantee that people will begin to trust these systems. Purpose: the study aims to provide a detailed analysis and systematization of available scientific information on psychological mechanisms underlying the formation of trust in explainable artificial intelligence systems applied in the field of justice, with the cognitive, emotional, and socio-psychological aspects considered. Methods: the study employed methods of analysis and synthesis to explore the complex system of the formation of trust in explainable artificial intelligence in the field of justice. An analysis of a wide range of open sources, including scientific articles, legal documents and research reports, made it possible to categorize facts regarding the perception of computerized judicial decisions and the level of trust in them. Results: the research has established that trust in AI applied in the field of justice develops at the intersection of three key aspects: the understanding of the fundamentals of AI operations, emotional acceptance of the technology, and its adherence to social norms. The author explains the significance of transparency and explainability of AI decisions, of the ability of AI systems to interact with users on an emotional level, and of the role of public opinion and expert examination in trust development. The study has found correlations between perceived accuracy of AI systems and trust levels, elucidated the importance of empathetic characteristics in AI interfaces and the influence of group dynamics on trust formation. 
Conclusions: the findings demonstrate the need for a comprehensive approach to AI implementation in the legal sector that would consider both psychological and technological factors. The paper provides practical recommendations for increasing trust in AI in justice, including the development of training programs, standardized explainability schemes, and transparent audit mechanisms. Recommended areas of future research include studies into cross-cultural variations in attitudes to AI, long-term strategic consequences and ramifications of justice automation, and the development of methods for assessing the effectiveness of human-AI collaboration in court.

Keywords: artificial intelligence, justice, trust, explainability, cognitive factors, emotional perception, socio-psychological aspects

Short address: https://sciup.org/147253716

IDR: 147253716 | UDC: 347 | DOI: 10.17072/1995-4190-2025-69-482-491



This work is licensed under the CC BY 4.0 license.

Artificial intelligence is progressively gaining traction within legal and judicial spheres and constitutes a paradigm shift in the understanding of justice, which necessitates a re-examination and reconstruction of society's perception of issues such as transparency, fairness, reliability, and legitimacy¹. In 2019, the United Nations Office on Drugs and Crime (UNODC) released a report highlighting both the potential of artificial intelligence and the need for a better understanding of its nature and mechanisms in judicial processes in order to prevent algorithmic bias, ensure data privacy, and avoid the erosion of human judgment in legal decision-making². Human understanding of the reasoning and operation of such systems is meant to be ensured through the concept of explainable AI (XAI).

Under the EU Artificial Intelligence Act¹, high-risk systems must be designed so that their operation is sufficiently transparent for deployers to interpret their output (Article 13), so that they can be effectively overseen by natural persons (Article 14), and so that they achieve an appropriate level of accuracy, robustness, and cybersecurity (Article 15). Yet even explainability is no guarantee that people will trust AI systems, whether these are based on neural networks, machine learning, deep learning, reinforcement learning, or other technologies covered by the umbrella term 'artificial intelligence'. The objective of this study is to carry out a detailed analysis and systematization of available scientific information on the psychological mechanisms underlying the formation of trust in explainable AI systems in the area of justice, taking into account cognitive, emotional, and socio-psychological aspects. These processes play a decisive role in how individuals perceive computerized legal systems and judge whether they are objective, just, and proficient. The present paper builds on the principles established in the 2018 European Ethical Charter on the Use of Artificial Intelligence in Judicial Systems². The Charter calls for adherence to basic principles such as respect for human rights, non-discrimination, security, transparency, and user control, particularly in sensitive law enforcement areas. Examining these principles from the perspective of psychological trust mechanisms allows for a better understanding of the issues raised by AI implementation in the judicial field. An in-depth study of the determinants of trust in AI in the legal field is expected to contribute substantially to the debate on AI regulation in this field.
Providing a typology of these determinants offers a foundation for formulating effective strategies and policies for building public trust in AI-enabled justice systems. This is a way to uphold the basic values of a democratic state and ensure the rule of law as new technologies are introduced into the judiciary.

Methodology

In the present research, the author employed the methods of analysis and synthesis to investigate the psychological processes involved in the complex formation of trust in explainable artificial intelligence applied in the justice system. The research methodology was guided by international legal documents such as the OECD Recommendation of the Council on Artificial Intelligence of 2019³, which emphasizes stakeholder collaboration and evidence-based evaluation in operating, managing, and controlling artificial intelligence. An examination of a wide variety of open sources, such as scientific articles, legal documents, and survey reports [2; 4; 37; 32; 10; 3; 17; 12; 9; 35; 33; 5; 6; 34; 7], as well as other research works listed in the references, made it possible to categorize facts concerning the perception of, and the level of trust in, computerized judicial rulings. A comprehensive analysis of diverse software tools used in legal practice [8] and a review of relevant literature [10; 3; 14; 27; 23] helped to identify major patterns and priority areas of research. This provided insight into how cognitive, emotional, and socio-psychological factors interact in the development of trust in AI systems in the area of jurisprudence.

Understanding the Principles of AI

An analysis of the level of trust in artificial intelligence systems in the justice sector, with cognitive factors taken into account, reveals a close relationship between the level of trust and the degree of public understanding of the principles of AI operation. This understanding includes a set of theoretical and practical knowledge, as well as awareness of the potential and actual applications of AI systems in law and legal proceedings. On the theoretical side, this includes concepts such as machine learning algorithms, neural networks, and decision trees; on the practical side, legal analytics, decision support systems, case management, and the like. As various studies show, there is a positive correlation between the results that artificial intelligence systems produce and the level of trust in them [1; 29; 11].

In addition, according to studies, information-literate people demonstrate a 30% higher level of trust than those who lack this knowledge or are in a state of digital nihilism⁴. 'Digital nihilists' can be described as people who tend to exaggerate the fears and risks associated with artificial intelligence, digital services, banks, transactions, cryptocurrencies, and other technologies of our century, even though these fears are unfounded and not supported by practice.

The level of knowledge and understanding of the functioning and intended purpose of AI systems can vary significantly. The nature and degree of the correlation between such literacy and trust in AI depend on the specifics of a particular system and the context of its application in the legal sphere.

A good example here is Estonia, where the population demonstrates a high level of digital literacy and where public trust in digital technologies, according to mass surveys, is around 80%¹. This case indicates that building trust in justice systems based on electronic legal proceedings requires, first of all, measures to improve digital literacy in the population so as to leave no room for prejudices, misconceptions, and fears.

Assessing the Accuracy and Reliability of an AI System

AI systems can operate in different ways, and the public perception of how accurately and reliably a particular AI-assisted system operates ultimately influences public trust in the judicial system as a whole. The factors behind a positive perception are algorithmic accuracy, absence of bias, risk mitigation, resilience to cyberattacks, and adherence to security protocols. An empirical analysis shows a very strong correlation between how effective a system appears to be and how much it is trusted. A negative example is COMPAS [17], a system that assesses the risk of recidivism. Although the program has been implemented in many organizations, public trust in it was low because many of its results were inaccurate and unfair, violating the principles of ethics, mutual trust, non-discrimination, etc.

According to other studies, systems that achieve a trust level of at least 50% are those that operate very accurately, with reports and statistics indicating error and failure rates close to 0% [12]. This suggests that information communicated to stakeholders and to the general public must be highly transparent and accurate, since the accuracy of an AI system's operation is strongly correlated with the level of trust in it.
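Correlations of this kind are commonly quantified with Pearson's coefficient r, which ranges from -1 to 1. The minimal Python sketch below shows that computation; it is an assumption about method, not a description of how the cited studies measured correlation [12], and the paired scores (on an assumed 1-7 scale) are hypothetical placeholders rather than real survey data.

```python
from math import sqrt


def pearson_r(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient of two paired samples (range -1..1)."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two paired samples of length >= 2")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sums of co-deviations and squared deviations; the 1/n factors cancel in the ratio.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    ss_x = sum((x - mean_x) ** 2 for x in xs)
    ss_y = sum((y - mean_y) ** 2 for y in ys)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("r is undefined when one sample is constant")
    return cov / sqrt(ss_x * ss_y)


# Hypothetical paired survey responses (illustrative only, not real data).
perceived_accuracy = [2, 3, 4, 5, 6, 7]
trust_score = [1, 3, 3, 5, 6, 6]
r = pearson_r(perceived_accuracy, trust_score)
print(f"r = {r:.3f}")  # prints "r = 0.962"
```

A value close to 1 indicates a strong positive association; on its own it does not show that accuracy causes trust.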

The Perception of Explainability of AI Decisions

Explainable AI is a versatile means of building trust in law enforcement and judicial processes. This concept enables the development of frameworks for analyzing AI outputs that support human decision-making and interpretation in legal contexts. In addition, research shows a strong correlation between how explainable and understandable an AI system is and the level of trust users place in it [9].

AI systems applied in legal contexts must incorporate mechanisms that provide compelling evidence and justification for their decisions. This conclusion follows from a study in which participants were given two systems built on different technologies: one based on complex generative neural networks, the other on a simpler, interpretable AI based on decision trees. Among the participants who used these AI systems for legal applications, the simpler system, which produced more understandable results while maintaining the same level of accuracy and fairness, inspired 60% more trust than the technically complex but less comprehensible one [35].
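The reason a decision tree counts as interpretable can be shown in a few lines: every prediction comes with the exact chain of tests that produced it, which can be shown to the user as a justification. The Python sketch below is illustrative only; the features, thresholds, and outcome labels are hypothetical and are not taken from the study cited [35].

```python
from typing import NamedTuple, Union


class Leaf(NamedTuple):
    label: str  # outcome returned to the user


class Node(NamedTuple):
    feature: str      # input feature tested at this node
    threshold: float  # go left if value <= threshold, otherwise right
    left: "Tree"
    right: "Tree"


Tree = Union[Leaf, Node]


def predict_with_explanation(tree: Tree, case: dict[str, float]) -> tuple[str, list[str]]:
    """Return the predicted label plus every test applied on the way to it."""
    path: list[str] = []
    node = tree
    while isinstance(node, Node):
        value = case[node.feature]
        if value <= node.threshold:
            path.append(f"{node.feature} = {value} <= {node.threshold}")
            node = node.left
        else:
            path.append(f"{node.feature} = {value} > {node.threshold}")
            node = node.right
    return node.label, path


# Hypothetical routing tree for civil claims (features and thresholds are invented).
tree = Node("claim_amount", 5000,
            left=Leaf("simplified procedure"),
            right=Node("num_parties", 2,
                       left=Leaf("standard procedure"),
                       right=Leaf("refer to judge for review")))

label, path = predict_with_explanation(tree, {"claim_amount": 12000, "num_parties": 3})
print(label)  # refer to judge for review
for step in path:
    print(" -", step)
```

A deep neural network offers no comparable built-in path; its explanations have to be produced by separate, approximate methods, which is one reason the simpler system is easier to trust.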

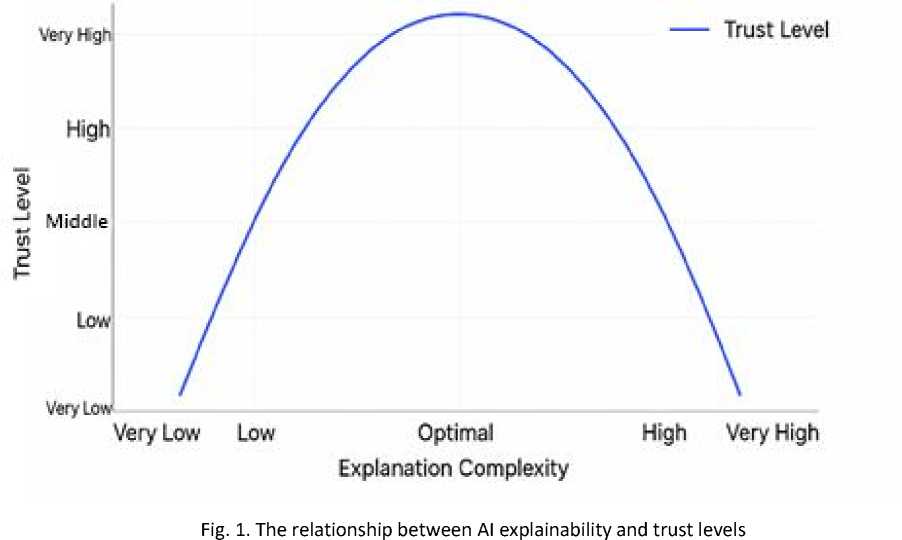

As shown in Fig. 1, public trust in an AI system increases with the system's complexity as long as the system remains interpretable; once it becomes too complex to understand, the relationship reverses and trust begins to decline.
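One simple way to formalize the inverted-U shape described for Fig. 1 is a concave quadratic, T(c) = α + βc − γc² with γ > 0, whose peak lies where the derivative β − 2γc equals zero, i.e. at c* = β / (2γ). The functional form and the coefficients in this Python sketch are assumptions chosen for illustration; they are not fitted to the data behind the figure.

```python
def trust(c: float, alpha: float = 0.2, beta: float = 0.8, gamma: float = 0.4) -> float:
    """Hypothetical inverted-U model T(c) = alpha + beta*c - gamma*c**2.

    c is an abstract complexity score; gamma > 0 makes the curve concave,
    so trust first rises and then falls as complexity grows.
    """
    return alpha + beta * c - gamma * c ** 2


def peak_complexity(beta: float = 0.8, gamma: float = 0.4) -> float:
    """Complexity c* where dT/dc = beta - 2*gamma*c equals zero."""
    if gamma <= 0:
        raise ValueError("gamma must be positive for an inverted U")
    return beta / (2 * gamma)


c_star = peak_complexity()  # 1.0 with the default coefficients
for c in (0.5, c_star, 1.5):
    print(f"complexity {c:.1f} -> trust {trust(c):.2f}")
```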

Another system, HART (Harm Assessment Risk Tool) [33], illustrates the question of whether the operation of an AI system is sufficiently clear to humans. Developed in the UK by Durham Constabulary together with researchers at the University of Cambridge to assess the risk of reoffending, it shows what approach should be taken to the transparency and interpretability of AI outputs and which specialists, especially from the judicial and legal field, should be involved in such development.

However, in some cases there is no strict correlation between perceived competence, interpretability, and explainability, on the one hand, and the level of trust, on the other: as some research findings show, if a system is not sufficiently complex while the task requires a high level of detail, the solutions used in simpler AI systems will not be adequate [5]. It is therefore necessary to find a balance that satisfies both developers and users and promotes user trust; this is achievable when users understand the principles on which an AI system is built and when it is guaranteed that its results will not violate ethical and humanistic principles. Hence, it is important to establish design approaches that enable interaction between AI and people and to develop flexible interpretation mechanisms that adapt the analysis to the complexity of the system and to user needs.

Emotional Aspects of Trust in AI in the Legal Sphere

Fear of Technology and Automation

The level of trust in artificial intelligence systems used in the justice system is strongly correlated with technophobia, or fear of technology: a psychological phenomenon manifested in cognitive-affective reactions to threats that people perceive in innovative technologies. As various studies show [6], the more severe a person's technophobia, the lower his or her trust in solutions based on artificial intelligence technologies. A striking example is the Austrian pilot program [34] that uses artificial intelligence to help analyze documents in administrative courts. At first, the project met strong resistance and distrust, as people feared that AI systems would not be able to cope with legal interpretations they considered subtle, sensitive, and complex. However, thanks to advertising campaigns conducted by the organization and to public involvement, impressive results were achieved: citizens' trust increased by more than 40% over the 6 months of the campaign [7]. This example shows that emotional resistance in the early stages of introducing an AI system is common, even when the system produces accurate results. The emotional aspects of accepting such technologies must therefore be worked through gradually and with due understanding.

Empathy and the Perception of the ‘Humanity’ of AI Systems

The Computers Are Social Actors (CASA) paradigm offers a framework for designing AI systems that integrate anthropomorphic characteristics more effectively. It holds that people tend to trust systems that demonstrate socially acceptable behavior and empathy [24]. In legal applications, perceived empathy is highly correlated with trust. An example is an AI-powered online dispute resolution system in British Columbia [30]. This system uses sophisticated natural language processing specifically designed to take emotional nuances into account, which research has shown to increase user satisfaction by over 55% compared to conventional online systems [38]. The software also proved more stable, and its sensitivity to users' emotions and reactions played a significant role. This case makes it clear that integrating elements of emotional intelligence and contextual awareness into an AI system increases trust in its application in the law enforcement sector. Therefore, human-centered AI that can navigate the complex emotional landscape of a field as challenging as legal disputes is in increasing demand.

Emotional Attitude Toward Fairness of AI Decisions

Fairness is often subjective. Nevertheless, from the perspective of trust formation, it is essential that AI systems deliver decisions free of bias and discrimination. Algorithmic fairness, understood as the result of a program that ensures technical accuracy and analyzes its results in light of precedent (i.e., past cases in the relevant area) as well as cultural traditions, social ideas, and similar aspects, should be a focus of attention in a field such as justice.

Practice offers many problematic examples. For instance, facial recognition systems used in the UK, although highly effective, showed discrimination against ethnic minorities, indicating that existing biases were perpetuated. This case shows that such factors deserve close attention and that, before a product is put into practice, a series of tests should be carried out until developers, participants, and testers conclude that the system works without such gaps. As another study has shown, transparency about how many potential biases were eliminated during beta testing, and about what data was used to train the AI, improves the accuracy of its results up to 70% [15].
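One common pre-deployment test for the kind of gap described above compares the rate of favorable outcomes across groups. The Python sketch below computes a disparate impact ratio (lowest group rate divided by highest) and checks it against the "four-fifths" rule of thumb from US employment-selection guidance. The choice of metric, the 0.8 threshold, and the toy records are assumptions for illustration; they are not the tests applied in the cases cited.

```python
from collections import defaultdict


def favorable_rates(records: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of favorable outcomes (e.g. 'assessed as low risk') per group."""
    totals: defaultdict[str, int] = defaultdict(int)
    favorable: defaultdict[str, int] = defaultdict(int)
    for group, is_favorable in records:
        totals[group] += 1
        favorable[group] += int(is_favorable)
    return {group: favorable[group] / totals[group] for group in totals}


def impact_ratio(rates: dict[str, float]) -> float:
    """Lowest group rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group receives a favorable outcome, so there is no gap in rates
    return min(rates.values()) / highest


def passes_four_fifths(records: list[tuple[str, bool]], threshold: float = 0.8) -> bool:
    """False when some group's rate is below 80% of the best group's rate."""
    return impact_ratio(favorable_rates(records)) >= threshold


# Hypothetical test-set outcomes for two demographic groups (not real data).
records = ([("A", True)] * 8 + [("A", False)] * 2
           + [("B", True)] * 5 + [("B", False)] * 5)
rates = favorable_rates(records)
print(rates, round(impact_ratio(rates), 3), passes_four_fifths(records))
```

A single ratio is only a screening signal: a system can pass it and still show unequal error rates across groups, so in practice several fairness metrics are usually checked together.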

All this suggests that it is necessary to build holistic approaches to systems based on the principles of fairness and transparency taking into account the factors that influence the level of public trust in them (Table 1), and also to develop algorithms that can be interpreted and explained to society [39].

Table 1

Factors of trust in AI when applied in justice

| Factor | Impact on trust level |
|---|---|
| Perceived empathy | Significant increase |
| Transparency of algorithms | Significant increase |
| Expert approval | Noticeable increase |
| User training | Strong increase over time |
| Interpretability and understandability of AI | Significant increase |
| Technophobia | Significant reduction |
| Pre-testing in other areas | Significant increase |
| AI as a complement to human labor | Moderate increase |
| Algorithmic fairness | Significant increase |
| Social norms and expectations | Moderate to strong increase |
| Group dynamics | Moderate to strong increase |

Socio-Psychological Factors of Trust in AI in Justice

The Influence of Social Norms and Expectations

Social expectations and norms are an important factor in ensuring trust in such a complex phenomenon as artificial intelligence applied in law enforcement, since they influence how people perceive and adopt technologies. There is a strong correlation between how society accepts AI based on certain technologies in other areas and how it will treat AI systems built on similar technologies in law enforcement. This has been demonstrated in practice in Singapore [20], where intelligent case management systems that had previously been tested in other areas gained a level of trust more than 65% higher than a system without such prior testing [31].

This suggests that, before AI systems are introduced in areas such as litigation, it is advisable to test them in less sensitive areas, such as law enforcement. Artificial intelligence should operate in close connection with legal processes; as studies show, trust in AI systems increases by 40% when neural networks and related technologies complement human work rather than replace it [19].

The Role of Authorities and Expert Opinion

An analysis of the opinions of authoritative experts, who significantly influence public perception of AI systems, points to the important role of epistemic trust in various contexts of AI application in the legal field. In addition, a literature analysis has shown that endorsement by an expert council and by authoritative figures in the field of artificial intelligence greatly strengthens public trust in explainable AI systems. For example, PROMETEA, an Argentinian program implemented in courts and prosecutors' offices, was at first met with great skepticism but was later highly praised by senior prosecutors and experts in AI ethics [8]. Public trust in this system increased by more than 50% after a series of open seminars and lectures, held under the guidance of expert councils, explained the system's purpose, the tasks it can perform, and the security measures provided for its functioning [30].

Research also shows that criticism and negative feedback have a detrimental effect on trust levels, which suggests that a balanced approach to, and fair judgment of, AI systems are needed.

Group Dynamics in the Perception of AI Systems

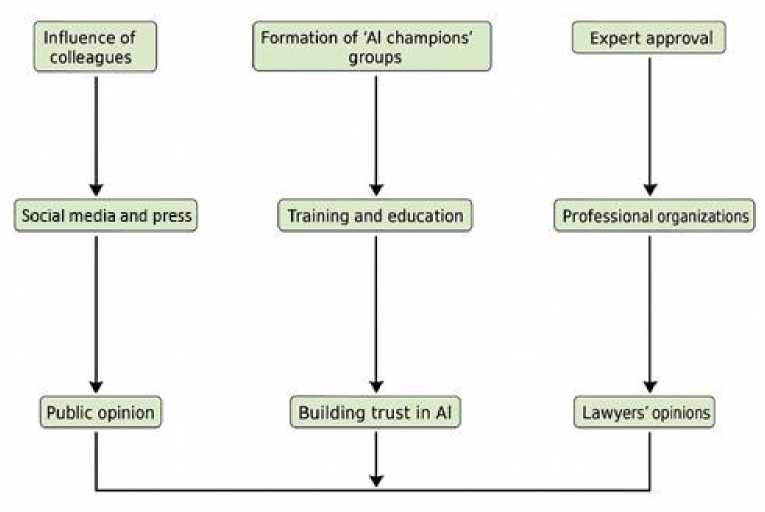

The group use of AI systems shows that group polarization and group identity can shape judgments, attitudes, and the level of trust in explainable artificial intelligence in the field of justice (Fig. 2).

For example, the HART system mentioned above was initially met with a negative attitude, but after a training course on its use, in which all its functions, advantages, and shortcomings were explained, trust among officers increased by 60% over about one year [25]. This suggests that group dynamics are at work: trust in a system can decrease or, conversely, increase depending on how well developed the AI system is and whether its users have mastered the technology. In addition, advertising, mass media, and social media have a considerable impact on trust, since collective perception is highly important in today's world. Various communication strategies and marketing techniques, involving different parties, authoritative figures and sources, and other mechanisms, can influence particular groups so as to shape their attitudes and level of trust.

Fig. 2 clearly shows that there are interrelated factors influencing group dynamics in the perception of modern technologies, including AI, that determine and shape overall trust in AI systems.

Fig. 2. Group dynamics in the formation of trust in AI

Transformation of Legal Consciousness under the Influence of AI

Changing Ideas about Justice

The modern world is undergoing a technological and legal transformation, manifested in the growing interaction between human judgment and algorithmic decision-making. A good example is the e-justice system in Estonia, which has gone through a multi-stage development and implementation process. According to surveys, more than 70% of Estonian citizens rate this system as fair. A public opinion analysis also shows that the majority of respondents consider traditional justice systems without AI to be outdated and ineffective as the only instrument for administering justice [22]. The level of digital trust in this country is accordingly very high. However, the population of many other countries, including technologically advanced ones, remains committed to the idea that only non-automated systems can represent justice. This points to the concept of digital legal acculturation, according to which the formation of legal consciousness in the field of digital technologies affects the overall level of trust and shapes attitudes toward the effectiveness of systems that complement justice with artificial intelligence in a given country.

The Evolution of the Concept of Legitimacy of Judicial Decisions

The concept of the legitimacy of judicial decisions is also undergoing changes. A concept of algorithmic legitimacy has emerged, reflecting a hybrid notion of legal authority that combines the judgments of human specialists with knowledge provided by artificial intelligence to supplement human expertise.

For instance, France has seen the introduction of Predictice [21], an AI-based platform that analyzes past court decisions and builds predictive models for various types of legal research. Initially, the legal community treated the system with some skepticism. However, after an information campaign explaining its benefits, and with further improvements to the system, trust in it increased significantly. According to research, 65% of the surveyed lawyers noted that AI technologies contribute effectively to legal research, from both a scientific and a practical point of view.

This example shows an expansion of the concept of legitimacy: artificial intelligence combines human and machine interaction while relying on objective data, ushering in a new era built on objectivity and on trust-based relationships between machines and humans in a hybrid form.

Practical Recommendations for Increasing Trust in AI in Justice

Increasing trust in justice systems based on artificial intelligence calls for a set of theoretical and practical measures grounded in strategies that take various practical aspects into account [28]. Comprehensive training programs on the use of artificial intelligence in the legal field should explain the principles of AI operation and its limitations, as well as the approach of explainable AI itself, with due regard for the principles of transparency and fairness [13; 36].

Standardized explainability schemes are intended to ensure that AI applications in the judicial field facilitate understanding and transparency. In addition, a design that incorporates emotional intelligence, empathy, and related aspects into the AI interface will help build trust and enhance stakeholders' ability to participate in the development of the system [11]. Furthermore, transparent audit mechanisms, ongoing reviews, and international cooperation can bring diverse points of view to bear on improving user acceptance. It is also important to follow global pro-regulation trends and to adhere to international standards and codes that promote consistency while allowing for differentiation, without violating the principles embedded in AI systems in the field of justice. These recommendations aim to create a reliable framework that can increase trust in systems based on artificial intelligence technologies.
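As an illustration of how a standardized explanation record and a transparent audit mechanism might fit together, the Python sketch below appends each AI output, with its model version and stated reasons, to a hash-chained log in which every entry's SHA-256 hash covers the previous entry's hash. The record fields, the class names, and the chaining design are all hypothetical; the paper does not prescribe any format.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ExplanationRecord:
    """Hypothetical standardized explanation attached to one AI output."""
    case_id: str
    model_version: str
    output: str
    reasons: tuple[str, ...]  # human-readable factors behind the output


def _digest(prev_hash: str, record: ExplanationRecord) -> str:
    # Canonical JSON (sorted keys) so identical records always hash identically.
    payload = json.dumps({"prev": prev_hash, "record": asdict(record)}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log in which each entry's hash covers the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[tuple[ExplanationRecord, str]] = []

    def append(self, record: ExplanationRecord) -> str:
        prev = self.entries[-1][1] if self.entries else self.GENESIS
        entry_hash = _digest(prev, record)
        self.entries.append((record, entry_hash))
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; an edited or reordered entry makes this return False."""
        prev = self.GENESIS
        for record, stored_hash in self.entries:
            if _digest(prev, record) != stored_hash:
                return False
            prev = stored_hash
        return True


log = AuditLog()
log.append(ExplanationRecord("case-001", "v1.2", "refer to judge", ("claim_amount > 5000",)))
log.append(ExplanationRecord("case-002", "v1.2", "simplified procedure", ("claim_amount <= 5000",)))
print(log.verify())  # True

# Silently rewriting an earlier decision breaks the chain.
tampered = ExplanationRecord("case-001", "v1.2", "simplified procedure", ("claim_amount > 5000",))
log.entries[0] = (tampered, log.entries[0][1])
print(log.verify())  # False
```

One limitation of this design: deleting the newest entries is not detected by `verify()` alone unless the latest hash is also published or stored outside the log, for example with an external auditor.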

Discussion

The psychological mechanism of trust formation in AI systems within the legal field involves a sophisticated interaction of cognitive, emotional, and socio-psychological factors [18; 26]. Research findings show that public confidence in the use of AI systems in the judiciary depends not only on their technical efficiency but also on whether they meet social expectations and moral standards. Another central component is explainability, that is, users' ability to see the rationale behind an AI system's decisions. This understanding and awareness in turn facilitate trust, since users can make rational judgments about the system's competence. Research shows, however, that complete trust is usually unattainable because of the inherent limitations of a purely rational assessment of AI systems: people cannot rationally evaluate every aspect of how a complex AI system operates, which prevents complete trust from forming [16]. Emotional factors, such as the ability of an AI system to respond with empathy, are essential in establishing emotional trust. This makes it necessary to develop AI systems that can not only address legal matters effectively but also empathize with people.

Particular attention should be given to the socio-psychological processes behind the formation of trust in AI-assisted justice. Studies find that social norms, group behavior, and influential voices significantly affect the development of trust in machine-based mechanisms of justice.

This calls for an overarching approach to introducing AI in the legal system that considers not only technological but also social contexts. One important area for future research is the long-term impact of AI implementation in justice on public attitudes toward the fairness and legitimacy of the judiciary. Another is a deeper examination of cultural variations in the perception of AI in the legal field, which can inform the development of more universal and adaptive systems. Particular attention should be given to how the combination of the human element and automation in judicial decision-making influences public confidence in the justice system as a whole. Thus, there is a clear need for additional interdisciplinary research integrating expertise in psychology, law, and information technology.

Conclusion

The research conducted is a comprehensive study of the psychological mechanisms of building trust in explainable artificial intelligence within the legal system and legal process. Based on a systematization and critical analysis of available scientific information, the paper reveals the main cognitive, emotional, and socio-psychological determinants of public perception of AI systems in the legal context.

The research findings indicate the need for a multidimensional strategy for AI development and deployment in the legal field. The main components of this strategy should include transparency and explainability of AI systems, consideration of emotional parameters of interaction with users, and adherence to social norms and values. The paper demonstrates that trust in AI in justice is established at the confluence of rational comprehension, emotional acceptance, and social approval.

It is necessary to develop general approaches for evaluating and enhancing the performance of human-AI collaboration within the legal process. This should involve the formation of mechanisms for increasing the degree of trust in hybrid decision-making systems and the development of training programs for legal professionals and the general public on the best modes of interaction with AI systems in the legal environment.

The future also looks promising for research on the ethics of AI use in justice, including algorithmic transparency, personal data protection, and the prevention of algorithmic discrimination. This field of research is closely linked to the development of a normative set of rules governing AI application in the judiciary.

Another major field of future research is the examination of psychological mechanisms of society’s adaptation to the growing involvement of AI in the legal field. This involves research on the transformation of legal consciousness of citizens, on their expectations from the judicial system, and on their readiness to trust technological solutions in the matters of justice.

All of these areas of research are important for ensuring the harmonized development of AI in line with ethical standards and moral values. Such studies are expected to facilitate the formation of durable trust in AI systems in the field of justice by providing a foundation for the successful introduction of technologies in the legal sphere while safeguarding the fundamental principles of justice and the rule of law in the digital era.